260318 X (Twitter) Roundup

RT by @hwchase17: A couple weekends ago I attended the @sotalikesfuture x @ARIA_

A couple weekends ago I attended the @sotalikesfuture x @ARIA_research hackathon.

Challenge 1: build a trustworthy multi-agent network. Agents that can identify each other, prove who sent what, delegate tasks with limits, and reject anything outside the trusted network.

The challenge mapped almost perfectly to what we'd been building at Kanoniv. Identity and trust for AI agents. The hackathon didn't inspire the idea, it confirmed the ecosystem needs it now.

So we shipped it.

Today we're open-sourcing kanoniv-agent-auth.

Every agent gets its own identity and keypair. Agents can delegate tasks to sub-agents with scope and budget caps, and authority narrows at each level, never widens. Every tool call is signed and verified before it executes. Revoke an agent's access and it takes effect immediately, no unwinding. Full audit trail on every action. Who did what, who authorized it, how deep in the chain.
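The "authority narrows at each level, never widens" rule can be illustrated with a minimal sketch. This is not the kanoniv-agent-auth API: the `Grant` type, its fields, and the `delegate` method are hypothetical names invented for illustration, and signing, verification, and revocation are left out entirely.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Grant:
    """Authority held by one agent (hypothetical type, not the library's)."""
    agent_id: str
    scopes: frozenset
    budget: float
    depth: int = 0
    parent: Optional["Grant"] = None

    def delegate(self, agent_id: str, scopes: set, budget: float) -> "Grant":
        """Issue a child grant that may only narrow this one."""
        extra = set(scopes) - self.scopes
        if extra:
            raise PermissionError(f"scopes {sorted(extra)} exceed parent grant")
        if budget > self.budget:
            raise PermissionError("budget exceeds parent grant")
        return Grant(agent_id, frozenset(scopes), budget, self.depth + 1, self)

    def chain(self) -> list:
        """Return the delegation path from the root agent down to this one."""
        node, path = self, []
        while node is not None:
            path.append(node.agent_id)
            node = node.parent
        return path[::-1]


# Assumed example agents and scopes, purely illustrative.
root = Grant("orchestrator", frozenset({"search", "pay", "email"}), budget=100.0)
child = root.delegate("researcher", {"search", "pay"}, budget=20.0)
leaf = child.delegate("buyer", {"pay"}, budget=5.0)
```

Here `leaf.chain()` gives the "who authorized it, how deep in the chain" view, and any attempt by `leaf` to hand out a scope it does not hold raises `PermissionError`.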

Rust, TypeScript, Python. Same behavior across all three. MIT licensed.

github.com/kanoniv/agent-aut…

Shoutout to @ObadiaAlex and the team for organizing. The challenge was exactly the right question at the right time.

If you're building with agents that call tools, spend money, or talk to other agents, try it, tell me what breaks.

Source: http://github.com/kanoniv/agent-auth

RT by @hwchase17: exciting avenues where evals/specs become the base language to

exciting avenues where evals/specs become the base language to build agents:

- start with a base harness, pretty barebones

- specify a goal to your agent. build up exactly what you mean with the agent

- map your crafted goal to specs/evals with the agent. Together you think really hard about “what do I want the agent behavior to be”

- agent loops and adjusts the harness until a threshold of evals pass

- human in the loop today for cheating/overfitting

Evals are a great language to specify behavior

Every row in your Eval dataset is a little vector that shifts the agent definition towards behavior to make that Eval pass
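The loop described above (the agent adjusts the harness until a threshold of evals pass) might look roughly like this. It is a sketch under stated assumptions: the harness is modeled as a plain dict, each eval is a pass/fail function, and `propose` is a hypothetical stand-in for the agent editing its own harness.

```python
from typing import Callable

Harness = dict                       # assumed: harness as named settings
Eval = Callable[[Harness], bool]     # assumed: each eval returns pass/fail


def pass_rate(harness: Harness, evals: list) -> float:
    """Fraction of evals that pass for this harness."""
    return sum(1 for e in evals if e(harness)) / len(evals)


def refine(harness: Harness, evals: list, propose: Callable,
           threshold: float = 0.9, max_rounds: int = 10):
    """Keep a proposed harness change only if it raises the pass rate."""
    best = pass_rate(harness, evals)
    for _ in range(max_rounds):
        if best >= threshold:
            break
        failing = [e for e in evals if not e(harness)]
        candidate = propose(harness, failing)
        score = pass_rate(candidate, evals)
        if score > best:
            harness, best = candidate, score
    return harness, best


# Toy evals and a toy proposer, invented for illustration.
evals = [
    lambda h: "cite sources" in h.get("system_prompt", ""),
    lambda h: h.get("tools") == "search",
]


def propose(harness: Harness, failing: list) -> Harness:
    new = dict(harness)
    if "cite sources" not in new.get("system_prompt", ""):
        new["system_prompt"] = new.get("system_prompt", "") + " Always cite sources."
    else:
        new["tools"] = "search"
    return new


final, score = refine({"system_prompt": "You are helpful."}, evals, propose, threshold=1.0)
```

The human-in-the-loop check for cheating or overfitting is not modeled here; in this sketch it would sit between `propose` and accepting the candidate.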

Source: https://nitter.net/Vtrivedy10/status/2033929261329891685#m

RT by @hwchase17: Who can build the biggest sand castle for their agent?

🏖️🏰

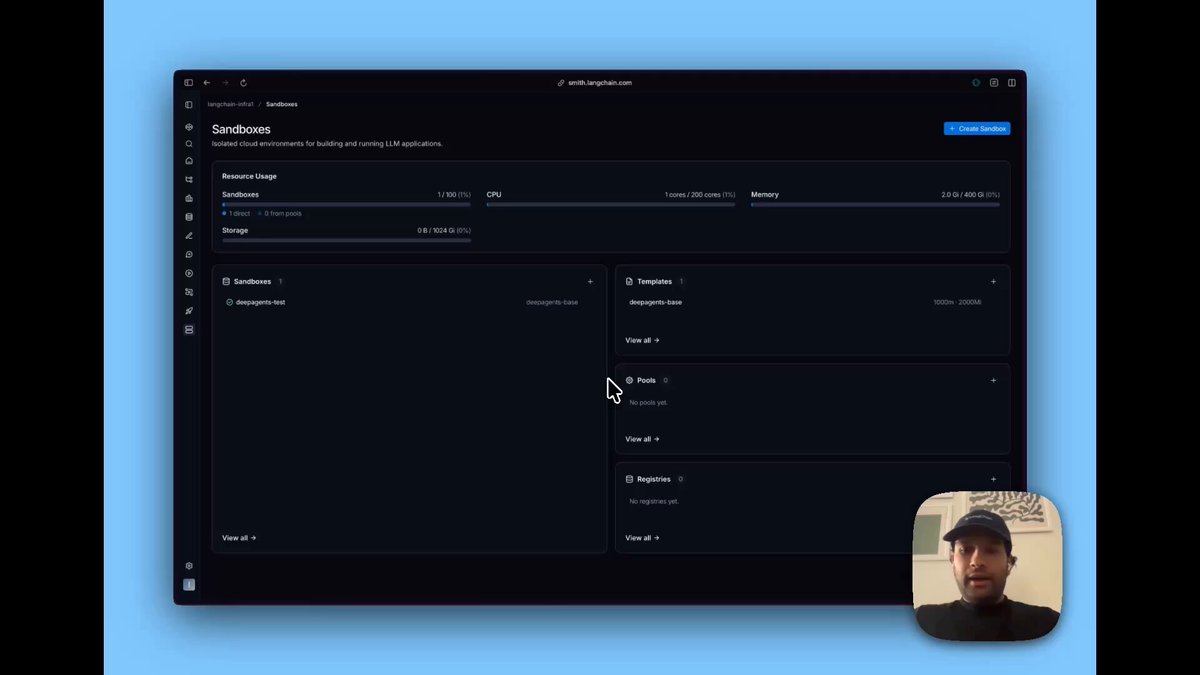

Who can build the biggest sand castle for their agent?

🏖️🏰

LangChain (@LangChain)

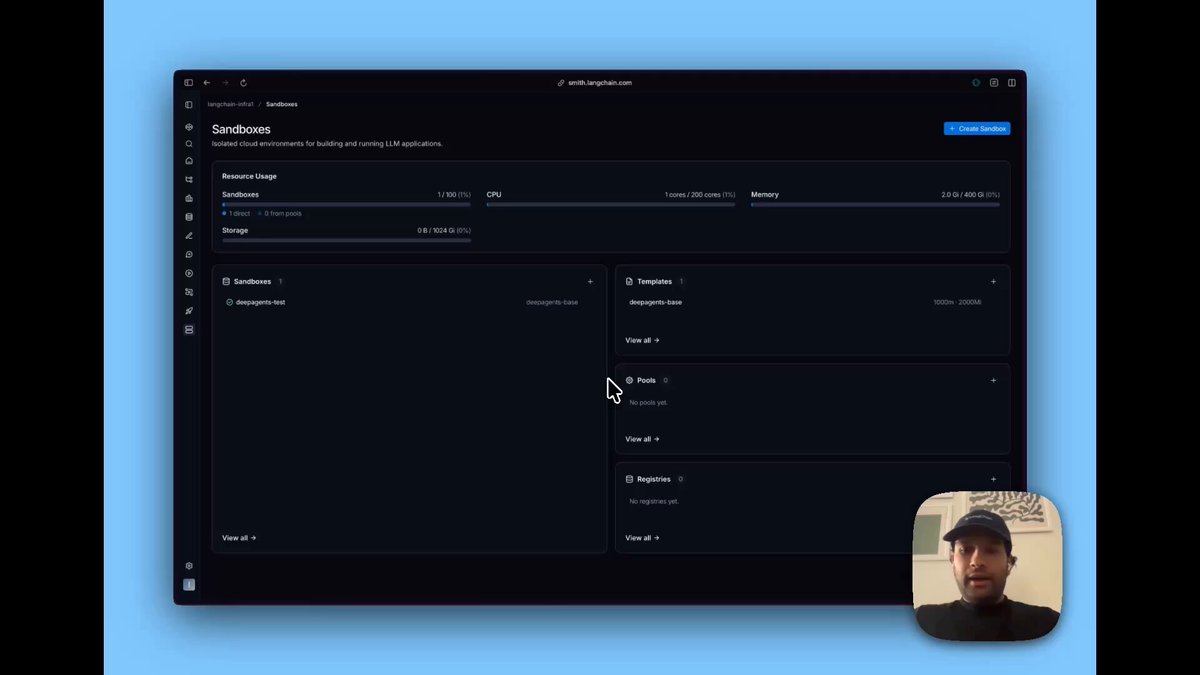

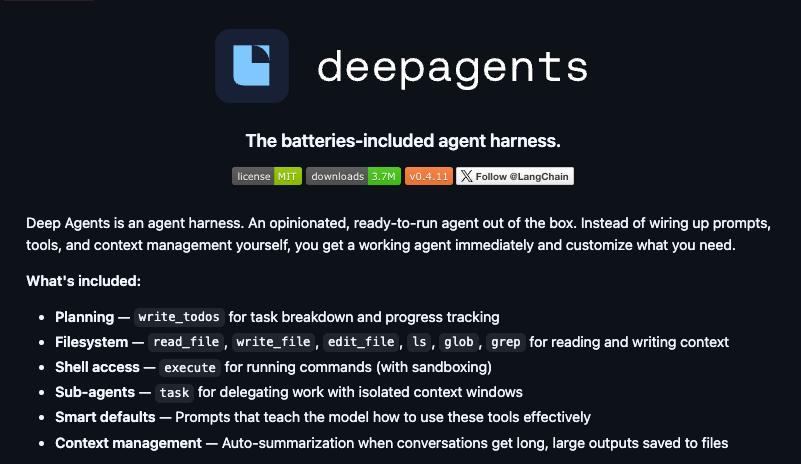

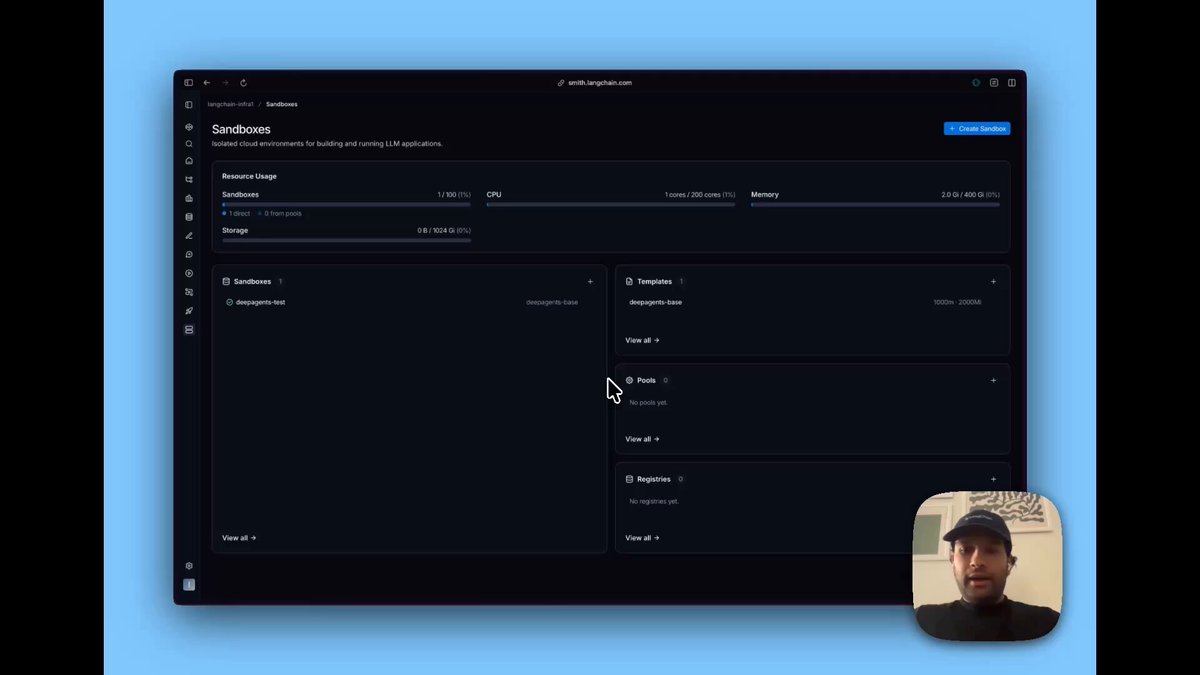

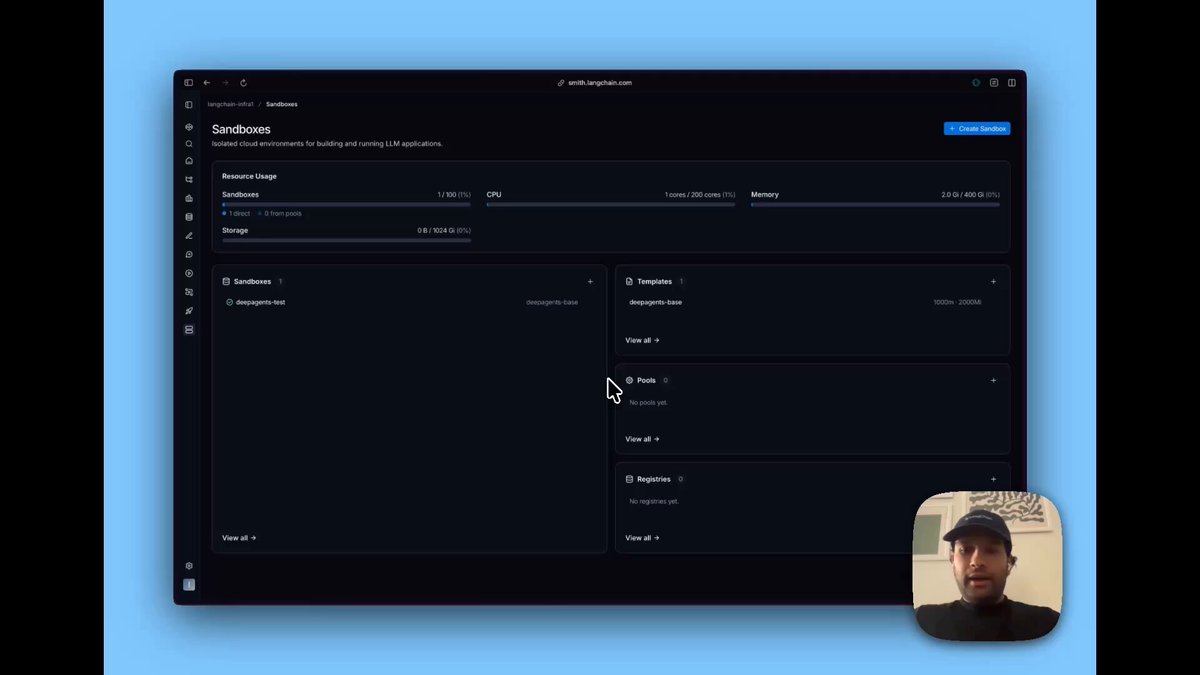

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/andrewnguonly/status/2033980464760070633#m

RT by @hwchase17: NVIDIA agents powered by @LangChain

NVIDIA agents powered by @LangChain

NVIDIA Newsroom (@nvidianewsroom)

The future of AI isn’t just models. It’s agents.

#NVIDIAGTC news: The NVIDIA Agent Toolkit with NemoClaw and OpenShell helps teams build trustworthy, autonomous agents that can reason, adapt, and operate safely. nvidianews.nvidia.com/news/a…

Source: https://nitter.net/JLEllingworth/status/2033728062714745130#m

RT by @hwchase17: the beauty of OpenSWE and other background coding agents is th

the beauty of OpenSWE and other background coding agents is that small tasks that weren’t worth the context switching costs now actually get done all via Slack

OpenSWE helps us cash in the easy wins

we build up the context in Slack, fire off the job with @ and review when we have time

compound that multiple times per day across the team and you can measurably improve things that wouldn’t have got done otherwise

LangChain (@LangChain)

Source: https://nitter.net/Vtrivedy10/status/2033985089609347175#m

RT by @hwchase17: love that @LangChain is enabling more teams to deploy their ow

love that @LangChain is enabling more teams to deploy their own internal agents easily.

two big open source sandbox launches today with them and @mastra both enabling this exact functionality for customers

really exciting stuff in the harness / internal agent wars

Harrison Chase (@hwchase17)

loved this article by @kishan_dahya

as we built openswe (fully oss background coding agent) we compared our decisions to the mental model outlined here

try out open swe here: github.com/langchain-ai/open…

Source: https://github.com/langchain-ai/open-swe

totally agree - memory is a moat. which is why it's important you own it

totally agree - memory is a moat. which is why it's important you own it

Mingta Kaivo 明塔 开沃 (@MingtaKaivo)

the internal agent is where the real IP accumulates. Stripe's Minions knows Stripe's API quirks, Ramp's Inspect knows Ramp's data models. an OSS framework is useful, but the institutional memory it runs on is the actual moat

Source: https://nitter.net/hwchase17/status/2034010391219736854#m

RT by @hwchase17: cobusgreyling.medium.com/cli…

Source: https://nitter.net/CobusGreylingZA/status/2033992745656652180#m

RT by @hwchase17: @LangChain on 🔥

@LangChain on 🔥

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/chrisnk14/status/2033996584523075819#m

RT by @hwchase17: Autonomous #software engineering system.

Autonomous #software engineering system.

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/yaelmendez/status/2034008074717893041#m

RT by @hwchase17: we're ready to switch from local to cloud environments?

we're ready to switch from local to cloud environments?

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/xeraa/status/2034008539312886266#m

RT by @hwchase17: Oh 😮 we SWE are switching up for real for real

Oh 😮 we SWE are switching up for real for real

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/samoye95/status/2034009666997326114#m

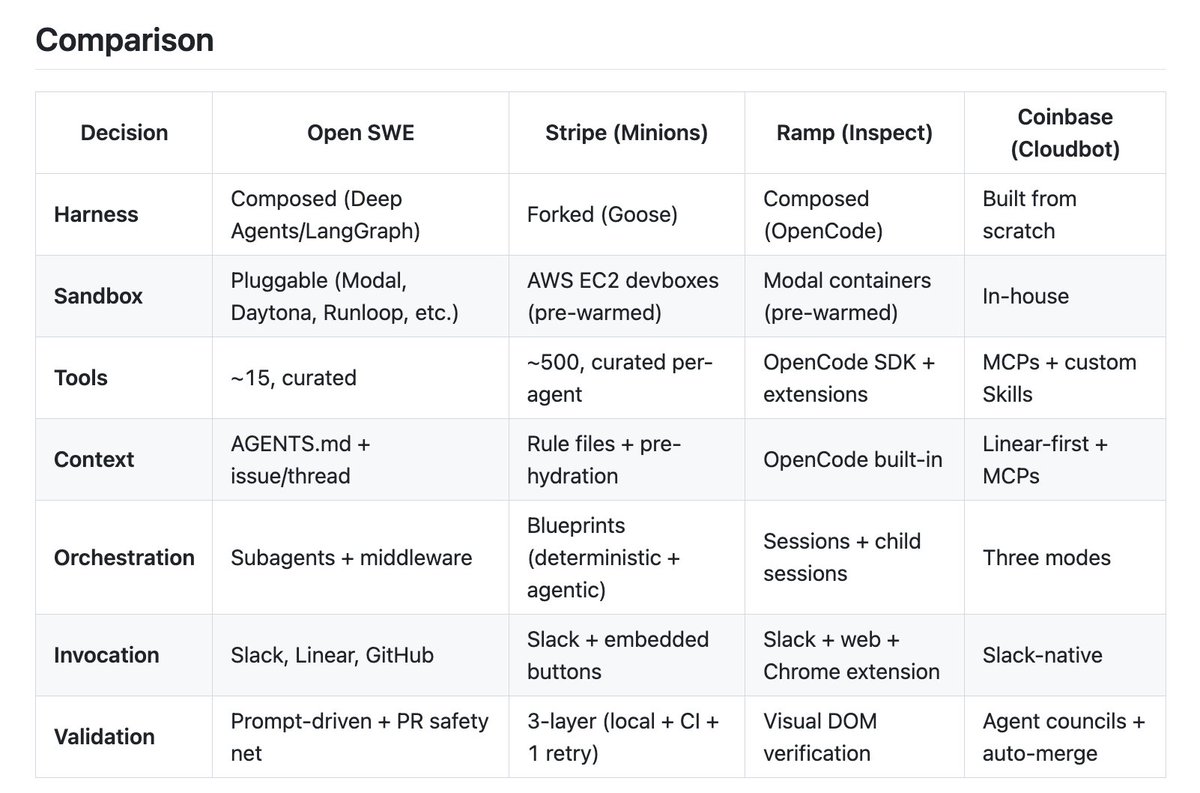

how does our OSS coding agent compare to others that Ramp, Stripe, Coinbase buil

how does our OSS coding agent compare to others that Ramp, Stripe, Coinbase built?

In order to compare, we'll use this excellent analysis from @kishan_dahya (nitter.net/kishan_dahya/status/20…) to break down the components:

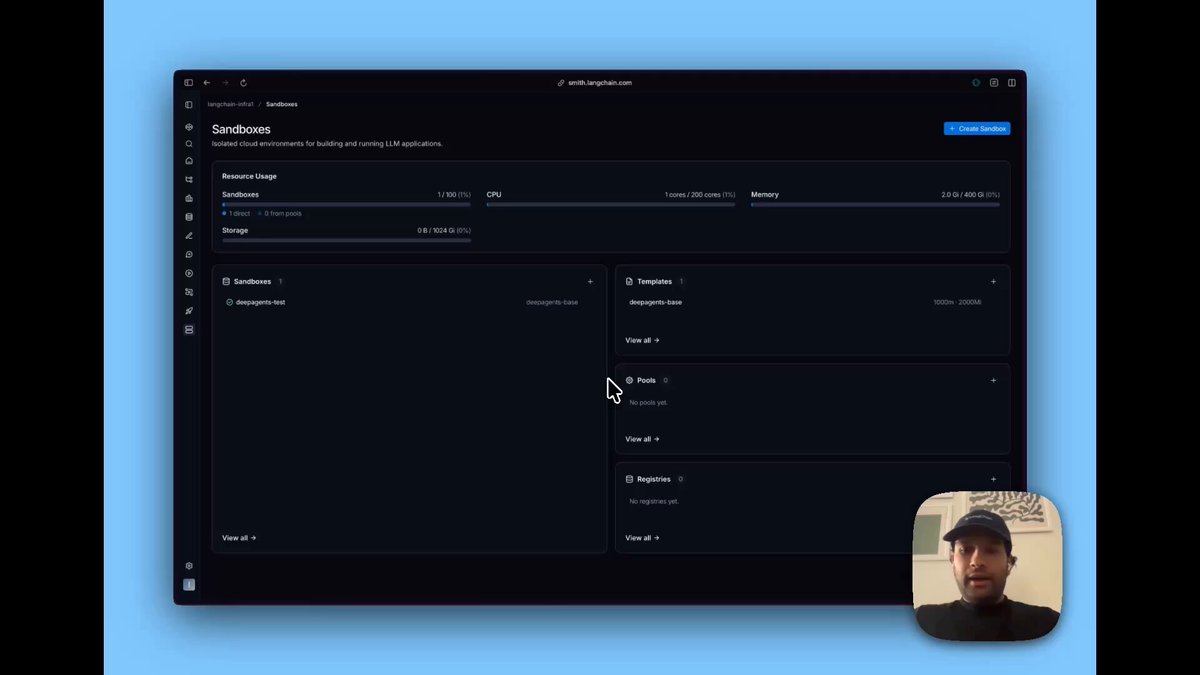

Harness: we use deepagents under the hood

Sandboxes: bring your own! we use our new LangSmith sandboxes, but also integrate with @e2b @daytonaio

Tools: a few basic ones (websearch, etc) on top of standard deepagents ones

Context engineering: agents.md

Orchestration: subagents and middleware

Invocation: slack, linear, github

Validation: all inside the agent

LangChain (@LangChain)

Source: https://nitter.net/hwchase17/status/2033978155334307878#m

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud c

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

LangChain (@LangChain)

Source: https://nitter.net/hwchase17/status/2033977192053612621#m

RT by @hwchase17: Announcing LangSmith Sandboxes by @LangChain.

Sandboxes give

Announcing LangSmith Sandboxes by @LangChain.

Sandboxes give your agent a secure & scalable environment for running code.

The team's been shipping at @LangChain. Lots more to come. @MukilLoganathan absolutely cooked with this one 🔥🔥🔥

LangChain (@LangChain)

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/JLEllingworth/status/2033971161969852885#m

Very excited for this partnership!

Very excited for this partnership!

Steve Jarrett (@stevejarrett)

Our great @orangebusiness CEO @AlietteML just launched the first trusted AI agents in Europe in our groundbreaking partnership with @LangChain.

LangChain and LangGraph agents will run on our #LiveIntelligence platform with on-premise LangSmith observation all running on GPUs hosted entirely in our latest sovereign Orange data center in France.

Excited for this state of the art solution for our customers requiring trusted AI solutions in France and beyond.

Thanks to the brilliant LangChain CEO @hwchase17 as well as the core Orange Trusted AI team including @dr_ujavaid , @miguelalva, @AnaBildea, and of course ‘The Father of Dinootoo’ Joachim Flechaire and our exec sponsors @bruno_zerbib and Aliette.

Bravo !

#TrustTheFuture #TrustOrange

Source: https://nitter.net/hwchase17/status/2033960048653930562#m

RT by @hwchase17: the filesystem-as-interface pattern is brilliant

could be rea

the filesystem-as-interface pattern is brilliant

could be real FS, could be LangGraph state, postgres, S3, Notion — doesn't matter

this is the unix philosophy applied to agents: everything is a file, every agent reads/writes the same way

unified interface > custom integration
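A minimal sketch of the filesystem-as-interface idea, assuming a two-method contract. The names `FileStore`, `DiskStore`, `StateStore`, and `append_note` are hypothetical and do not come from any particular library; the point is only that the agent-side code stays the same while the backend changes.

```python
from pathlib import Path
from typing import Protocol


class FileStore(Protocol):
    """The one interface every backend exposes (assumed shape)."""
    def read(self, path: str) -> str: ...
    def write(self, path: str, content: str) -> None: ...


class DiskStore:
    """Backend over a real directory."""
    def __init__(self, root: str) -> None:
        self.root = Path(root)

    def read(self, path: str) -> str:
        return (self.root / path).read_text(encoding="utf-8")

    def write(self, path: str, content: str) -> None:
        target = self.root / path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")


class StateStore:
    """Backend that keeps 'files' in a dict, e.g. a slice of agent state."""
    def __init__(self) -> None:
        self.files: dict = {}

    def read(self, path: str) -> str:
        return self.files[path]

    def write(self, path: str, content: str) -> None:
        self.files[path] = content


def append_note(store: FileStore, note: str) -> str:
    """Agent-side code: identical regardless of the backend."""
    try:
        existing = store.read("notes/todo.md")
    except (KeyError, FileNotFoundError):
        existing = ""
    store.write("notes/todo.md", existing + note + "\n")
    return store.read("notes/todo.md")
```

A Postgres, S3, or Notion backend would implement the same two methods, which is the "unified interface > custom integration" trade the post describes.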

Source: https://nitter.net/agentxagi/status/2033952307440689183#m

RT by @hwchase17: The biggest software bet Jensen made today: NemoClaw.

He call

The biggest software bet Jensen made today: NemoClaw.

He called OpenClaw "the most popular open source project in the history of humanity."

Nvidia just made it enterprise-ready.

One command. Installs the full secure stack. Deploys an AI agent on your own hardware.

Jensen compared NemoClaw to what Windows did for personal computers.

Shruti (@heyshrutimishra)

In collaboration with NVIDIA, I am giving away:

→ $100 BrevCode credits (1 winner)

→ NVIDIA swag T-shirts (multiple winners)

Register for GTC (free): nvda.ws/3ONqdE5

Reply with your registration screenshot + the session you are attending within the next 3 days.

All the best! 😁

#NVIDIA #GTC2026 @nvidia @NVIDIAGTC

Source: https://nitter.net/heyshrutimishra/status/2033805372104888424#m

RT by @hwchase17: Running agents requires isolated, ephemeral compute that’s fas

Running agents requires isolated, ephemeral compute that’s fast to spin up. Huge ship from @MukilLoganathan and the team!

LangChain (@LangChain)

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/ankush_gola11/status/2033970148533801232#m

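RT by @hwchase17: Open SWE is a coding agent framework that LangChain open-sourced today. Its core idea: take the patterns Stripe, Ram

Open SWE is a coding agent framework that LangChain open-sourced today. Its core idea is to distill the production practices that Stripe, Ramp, Coinbase, and other companies each worked out on their own into a reusable, customizable open-source foundation.

Five core architectural patterns

1. Isolated sandbox execution: each task runs in its own cloud sandbox. The agent has full permissions inside the boundary, but the blast radius of any mistake is strictly limited and cannot reach production systems. Multiple sandbox backends are supported, including Modal, Daytona, and Runloop.

2. Curated toolset: Stripe's agent has roughly 500 tools, but they are carefully selected and maintained rather than piled up. Tool quality matters more than tool quantity, a conclusion that production deployments have confirmed repeatedly.

3. Slack-first invocation: all three companies use Slack as the main entry point, so the agent fits into developers' existing workflow instead of asking everyone to switch to a new interface. Open SWE also supports Linear and GitHub PRs.

4. Full context injected at startup: before a task begins, the agent reads the full context of the Linear issue, Slack, or GitHub PR, which cuts the overhead of slowly discovering requirements through tool calls at run time. The repository's AGENTS.md file is also injected into the system prompt, encoding team conventions, architectural decisions, and the like.

5. Subagent orchestration: complex tasks are split across specialized subagents, each with its own isolated context so they do not contaminate one another. Combined with the middleware mechanism, this separates "AI-driven flexibility" from "the reliability of deterministic logic".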
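Pattern 4 above (assembling the full context once, including AGENTS.md, before the agent takes its first step) can be sketched as follows. This is not Open SWE's implementation: `build_system_prompt`, its parameters, and the section headings are assumptions made for illustration.

```python
from pathlib import Path


def build_system_prompt(repo: Path, base_prompt: str, task_context: str) -> str:
    """Assemble the prompt up front instead of discovering context via tool calls."""
    parts = [base_prompt]
    agents_md = repo / "AGENTS.md"
    if agents_md.is_file():
        # Team conventions and architecture notes live in the repository itself.
        conventions = agents_md.read_text(encoding="utf-8").strip()
        parts.append("## Repository conventions\n" + conventions)
    # task_context stands in for the issue, thread, or PR text fetched beforehand.
    parts.append("## Task\n" + task_context.strip())
    return "\n\n".join(parts)
```

When the repository has no AGENTS.md, the conventions section is simply omitted and the prompt contains only the base prompt and the task.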

Harrison Chase (@hwchase17)

how does our OSS coding agent compare to others that Ramp, Stripe, Coinbase built?

In order to compare, we'll use this excellent analysis from @kishan_dahya (nitter.net/kishan_dahya/status/20…) to break down the components:

Harness: we use deepagents under the hood

Sandboxes: bring your own! we use our new LangSmith sandboxes, but also integrate with @e2b @daytonaio

Tools: a few basic ones (websearch, etc) on top of standard deepagents ones

Context engineering: agents.md

Orchestration: subagents and middleware

Invocation: slack, linear, github

Validation: all inside the agent

Source: https://nitter.net/hongming731/status/2034030641906598125#m

RT by @hwchase17: LangChain just open-sourced a replica of Claude Code. It's cal

LangChain just open-sourced a replica of Claude Code. It's called Deep Agents.

MIT licensed, model-agnostic, and fully inspectable - so you can finally see exactly how coding agents like Claude Code are built under the hood.

The black box just became a textbook.

GitHub: github.com/langchain-ai/deep…

Source: http://github.com/langchain-ai/deepagents

RT by @hwchase17: a new player has entered the game

sandboxes are the new arena

a new player has entered the game

sandboxes are the new arena

LangChain (@LangChain)

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/j_schottenstein/status/2033967067297378628#m

RT by @hwchase17: Really excited to finally launch this in private preview. We h

Really excited to finally launch this in private preview. We have a lot more planned re memory, security, and monitoring to provide the most secure ephemeral environments for your agents. If you are interested would love to chat!

We will be letting people off the waitlist incrementally but DM me if you want quicker access!

LangChain (@LangChain)

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/MukilLoganathan/status/2033969669665648676#m

this is a good question!

deepagents (our agent harness) has a sandbox abstracti

this is a good question!

deepagents (our agent harness) has a sandbox abstraction. this makes it easy to define your own sandbox (including one that runs on prem)

docs.langchain.com/oss/pytho…

pupska (@pupska)

Is it possible to run it inside company internal infra perimeter?

Briefly looked through the post and seems only deployable to vendor-managed sandbox envs?
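To make the "define your own sandbox" answer concrete, here is a rough sketch of what a pluggable sandbox abstraction could look like. It does not reproduce the deepagents interface, which may differ: `SandboxBackend`, `ExecResult`, and `LocalProcessBackend` are hypothetical names, and the local backend below provides no isolation at all. It only shows where an on-prem implementation would plug in.

```python
import subprocess
import sys
from dataclasses import dataclass
from typing import Protocol


@dataclass
class ExecResult:
    exit_code: int
    output: str


class SandboxBackend(Protocol):
    """Hypothetical interface; the real deepagents abstraction may differ."""
    def run_python(self, code: str, timeout: float = 10.0) -> ExecResult: ...


class LocalProcessBackend:
    """Runs code in a child process on this machine. NOT isolated: illustration only."""
    def run_python(self, code: str, timeout: float = 10.0) -> ExecResult:
        proc = subprocess.run(
            [sys.executable, "-c", code],
            capture_output=True, text=True, timeout=timeout,
        )
        return ExecResult(proc.returncode, proc.stdout + proc.stderr)


def agent_step(backend: SandboxBackend, code: str) -> str:
    """Agent-side call site: unaware of which backend executes the code."""
    result = backend.run_python(code)
    return result.output if result.exit_code == 0 else f"error: {result.output}"
```

An internal deployment would replace `LocalProcessBackend` with a class that talks to infrastructure inside the company perimeter while `agent_step` stays unchanged.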

Source: https://nitter.net/hwchase17/status/2034016868261105861#m

RT by @hwchase17: Solid

Solid

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/seshadrinithin/status/2034016390877794459#m

RT by @hwchase17: 🤯🤯🤯

🤯🤯🤯

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/rdjooo/status/2034010525378515218#m

RT by @hwchase17: So truth

So truth

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/JamesNguyen868/status/2034010823589564743#m

we saw very few companies building their own IDE or CLI

i think a lot will buil

we saw very few companies building their own IDE or CLI

i think a lot will build their own background agents (integration is a lot harder)

hopefully this enables that

Cole McIntosh (@colesmcintosh)

the fact that it's fully oss is what makes this actually interesting. every company having access to this levels the playing field a lot

Source: https://nitter.net/hwchase17/status/2034015194859638981#m

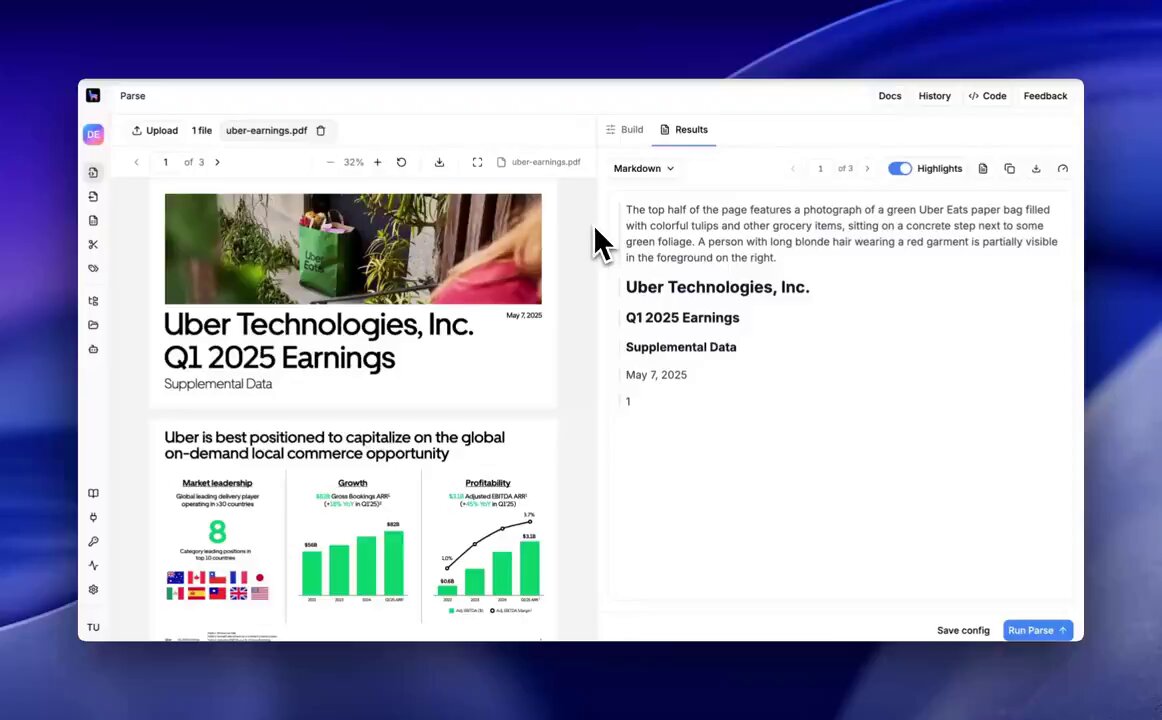

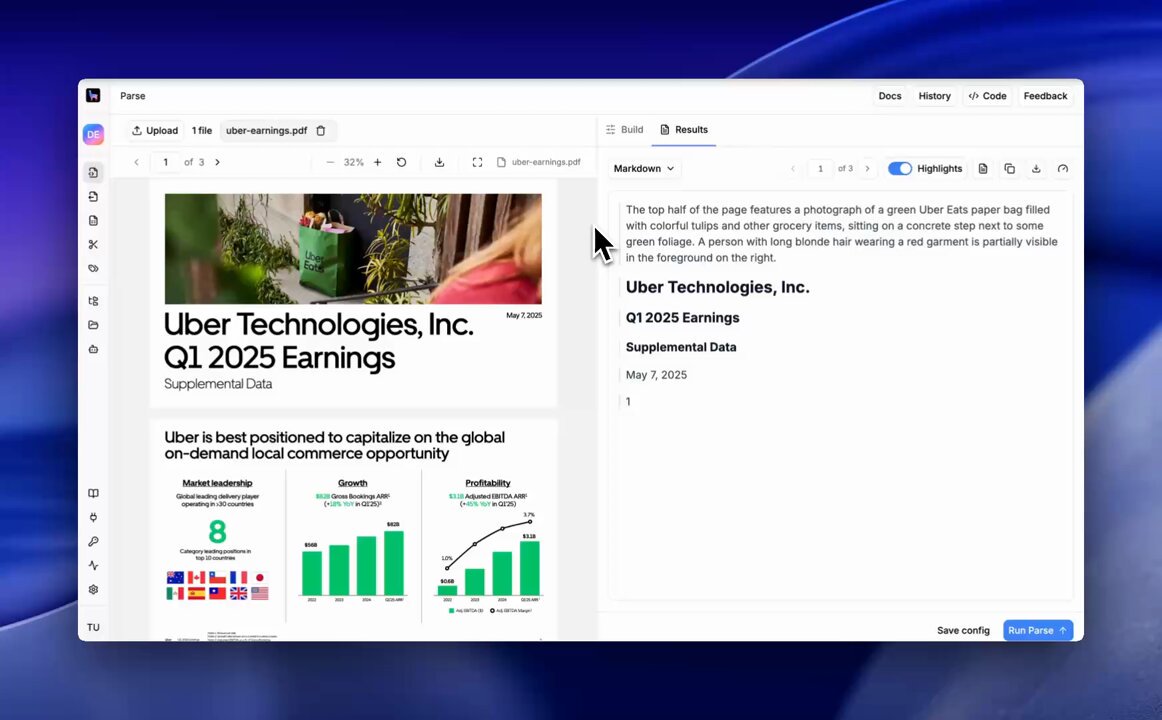

One of the hardest problems with using AI agents to automate meaningful document

One of the hardest problems with using AI agents to automate meaningful document work (contracts, KYC, diligence, claims, and more) is not actually building the agent, but building the UI/UX audit trail so the human can understand decisions linking back to the source documents - in PDFs, PowerPoint, Word, etc.

This requires really good layout detection and segmentation, that you need to surface as *metadata context* to the AI agent, beyond any document -> markdown conversion.

We've built really nice VLM capabilities to identify and segment all the elements within your document: from tables to charts to form boxes. You can use this metadata to directly provide experiences where humans can analyze the source document even as decisions are being made.

Come check out LlamaParse! cloud.llamaindex.ai/?utm_sou…

LlamaIndex 🦙 (@llama_index)

One of the hardest problems with document parsing is trust. How do you know the output actually corresponds to what's in the source?

LlamaParse has visual grounding with bounding box citations for outputs, and it addresses exactly this.

Two ways to use it:

1️⃣ In the UI: hover over any element in the markdown output and it highlights the exact region it came from in the original document. Great for spot-checking complex tables, multi-column layouts, or figures where parsing can be tricky.

2️⃣ In the JSON output: every parsed element carries bounding box coordinates, i.e. the precise location of that element within the source file.

That means you can build applications that don't just surface an answer, but can point back to exactly where in a document it came from.

For due diligence, where auditability matters, this is a step up from "trust the output." You can verify it, cite it, and build on it.
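As a rough illustration of pointing an answer back to its source region, the sketch below looks up which parsed elements contain an answer string and returns their page and bounding box. The element shape used here (`page`, `text`, and a `bbox` dict with `x`, `y`, `w`, `h`) is an assumption for the example and is not the documented LlamaParse JSON schema; the sample data is made up.

```python
from dataclasses import dataclass


@dataclass
class Citation:
    page: int
    bbox: tuple  # (x, y, width, height), assumed layout
    text: str


def cite(answer: str, elements: list) -> list:
    """Return every parsed element whose text contains the answer string."""
    needle = answer.casefold()
    hits = []
    for el in elements:
        if needle in el["text"].casefold():
            b = el["bbox"]
            hits.append(Citation(el["page"], (b["x"], b["y"], b["w"], b["h"]), el["text"]))
    return hits


# Made-up sample data in the assumed shape.
elements = [
    {"page": 1, "text": "Master Services Agreement",
     "bbox": {"x": 72, "y": 64, "w": 300, "h": 24}},
    {"page": 3, "text": "Termination notice period: 30 days",
     "bbox": {"x": 72, "y": 410, "w": 380, "h": 18}},
]
hits = cite("30 days", elements)
```

A UI could then draw the returned box over the matching page so a reviewer sees the exact region behind the answer.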

Sign up to LlamaParse to get started: cloud.llamaindex.ai?utm_sour…

Source: https://nitter.net/jerryjliu0/status/2034047686262087720#m

We hosted an executive dinner at NVIDIA GTC with @Modular.

600+ people signed u

We hosted an executive dinner at NVIDIA GTC with @Modular.

600+ people signed up, and we had to turn away more than 500. We had a packed house 🔥

This is one of the only dinners where we discuss the impact of AI agents across the entire stack - from infrastructure/systems to context and agentic engineering. Some of the topics we discussed:

- Is the future composed of general models or specialized models?

- Are agents a systems problem or model problem?

- How are non-technical users adopting agent tooling like Claude Cowork?

- Where do humans spend the most time on repetitive document work?

Massive thank you to the Modular team for partnering with @llama_index on this, and to every single person who showed up.

To the 500+ on the waitlist - next time we're getting a bigger venue.

Source: https://nitter.net/jerryjliu0/status/2034043422622028158#m

Related notes

- [[260317_x]] - similar keywords

- [[260319_x]] - similar keywords

- [[260313_x]] - similar keywords

- [[260315_hn]] - similar keywords

- [[260314_x]] - similar keywords

- [[260319_tg]] - similar keywords

- [[260315_tg]] - similar keywords

- [[260316_x]] - similar keywords

- [[260310_tg]] - similar keywords

- [[260315_reddit]] - similar keywords

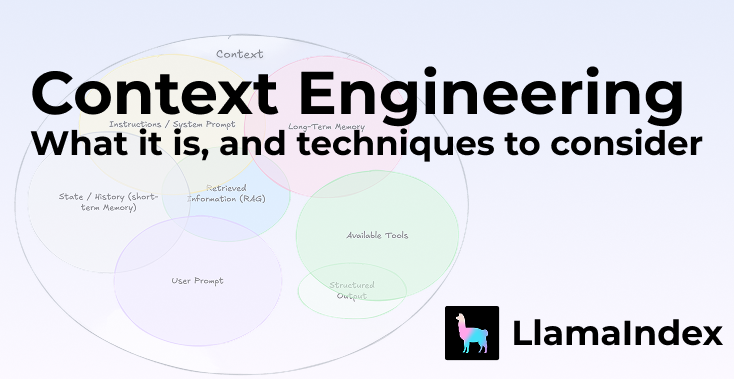

RT by @jerryjliu0: Context engineering is the new prompt engineering — and if yo

Context engineering is the new prompt engineering — and if you're building AI agents, you need to understand the difference and why parsing your data correctly sits at the heart of it

Andrej Karpathy put it well: context engineering is "the delicate art and science of filling the context window with just the right information for the next step."

It's not just about the instructions you give an LLM. It's about what you put IN front of it.

That context can come from a lot of places:

— System prompts

— Chat history & long-term memory

— Knowledge base retrieval

— Tool definitions & responses

— Structured outputs

One of the most underrated levers? Structured information.

This is exactly what LlamaParse + LlamaExtract are built for.

Parse your complex documents properly → extract structured, relevant fields → pass clean, dense context to your agent.

Better parsing = better context = better agents. It really is that simple.
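The last step of that pipeline, passing clean and dense context to the agent, can be sketched in a few lines. This does not use LlamaParse or LlamaExtract; the extracted fields are hard-coded sample data, the function names are hypothetical, and the character budget is a crude stand-in for a token budget.

```python
def render_fields(fields: dict) -> str:
    """Turn extracted fields into a compact block, skipping empty values."""
    return "\n".join(f"{k}: {v}" for k, v in fields.items() if v not in (None, "", []))


def assemble_context(sections: list, max_chars: int) -> str:
    """Add sections in priority order, dropping any that would exceed the budget."""
    out, used = [], 0
    for title, body in sections:
        block = f"[{title}]\n{body}"
        if used + len(block) > max_chars:
            continue  # approximate: separators are not counted
        out.append(block)
        used += len(block)
    return "\n\n".join(out)


# Sample values invented for illustration.
fields = {"invoice_number": "INV-0042", "total": "1,250.00 EUR", "po_number": None}
context = assemble_context(
    [
        ("system", "You answer questions about invoices."),
        ("extracted fields", render_fields(fields)),
        ("chat history", "user: hi\n" * 200),
    ],
    max_chars=300,
)
```

With this budget the short structured block fits while the long chat history is dropped, which is the sense in which structured fields give denser context.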

Take a look back at a piece by @tuanacelik and @LoganMarkewich for the full breakdown: what context engineering is, what makes up context, and the key techniques to consider, from memory blocks to workflow engineering.

Read it here 👇

llamaindex.ai/blog/context-e…

Source: https://nitter.net/llama_index/status/2034347384973762694#m

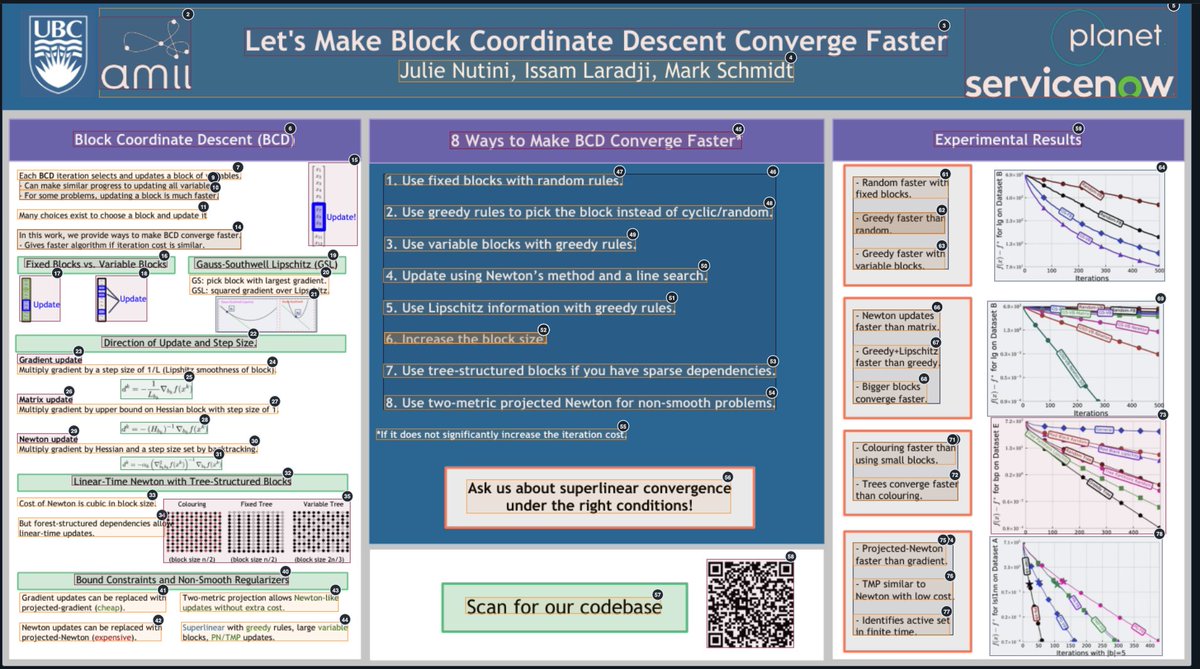

We've massively improved our document layout capabilities in LlamaParse 📄📐

This

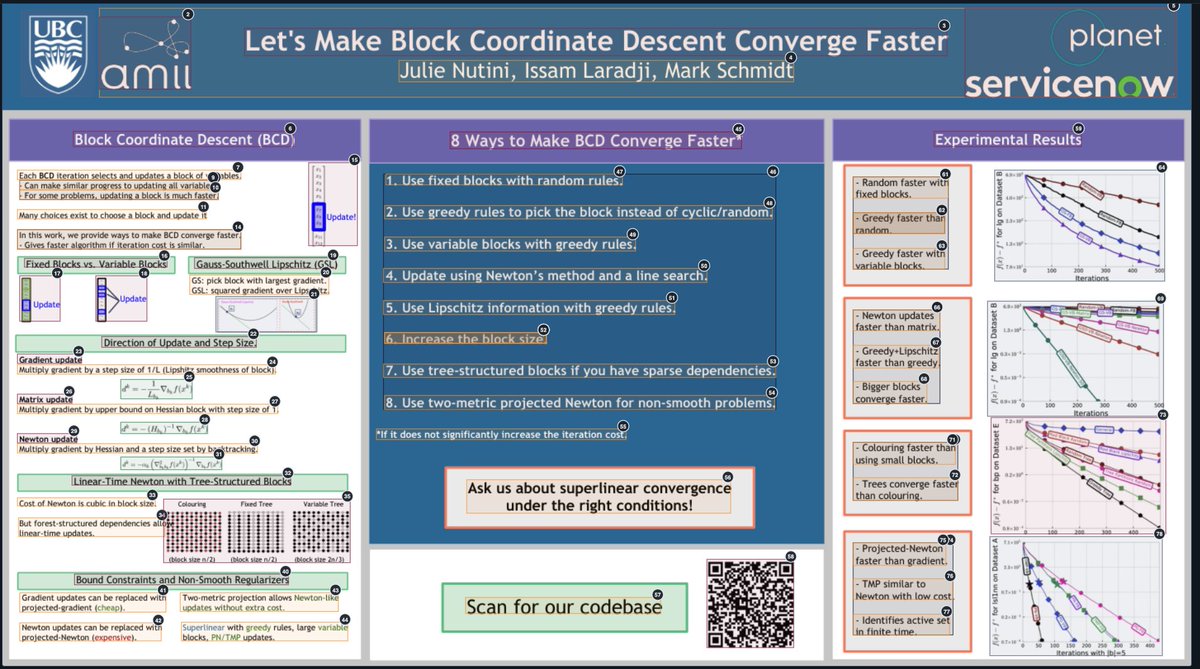

We've massively improved our document layout capabilities in LlamaParse 📄📐

This means that our document OCR engine lets you get insanely detailed bounding boxes over really complex multimodal documents, like the research poster shown below.

A core requirement for any agentic document workflow is enabling users to trace back to the source. Now your AI agents can reason over complex line charts and tables deeply embedded within specific pages, free of hallucinations, but also surface the source segment to the user.

Come check out LlamaParse: cloud.llamaindex.ai/?utm_sou…

If you are building document OCR in production, come talk to us: llamaindex.ai/contact?utm_so…

LlamaIndex 🦙 (@llama_index)

LlamaParse Agentic Plus mode now delivers precise visual grounding with bounding boxes for the most challenging document elements.

Our latest update brings major improvements to how we handle complex visual content:

📐 Complex LaTeX formulas - accurately parse mathematical expressions with precise positioning

✍️ Handwriting recognition - extract handwritten text with location coordinates

📊 Complex layouts - navigate multi-column documents and intricate formatting

📈 Infographics and charts - identify and extract data visualizations with spatial context

This means you can now build applications that not only extract text from documents but also understand exactly where that content appears on the page - perfect for creating more intelligent document analysis workflows.

Try LlamaParse Agentic Plus mode and see how visual grounding transforms your document parsing capabilities: cloud.llamaindex.ai?utm_sour…

Source: https://nitter.net/jerryjliu0/status/2034342882795225472#m

RT by @jerryjliu0: LlamaParse Agentic Plus mode now delivers precise visual grou

LlamaParse Agentic Plus mode now delivers precise visual grounding with bounding boxes for the most challenging document elements.

Our latest update brings major improvements to how we handle complex visual content:

📐 Complex LaTeX formulas - accurately parse mathematical expressions with precise positioning

✍️ Handwriting recognition - extract handwritten text with location coordinates

📊 Complex layouts - navigate multi-column documents and intricate formatting

📈 Infographics and charts - identify and extract data visualizations with spatial context

This means you can now build applications that not only extract text from documents but also understand exactly where that content appears on the page - perfect for creating more intelligent document analysis workflows.

Try LlamaParse Agentic Plus mode and see how visual grounding transforms your document parsing capabilities: cloud.llamaindex.ai?utm_sour…

Source: https://nitter.net/llama_index/status/2034300076441633276#m

R to @AnthropicAI: Hopes clustered around a few basic desires, but concerns abou

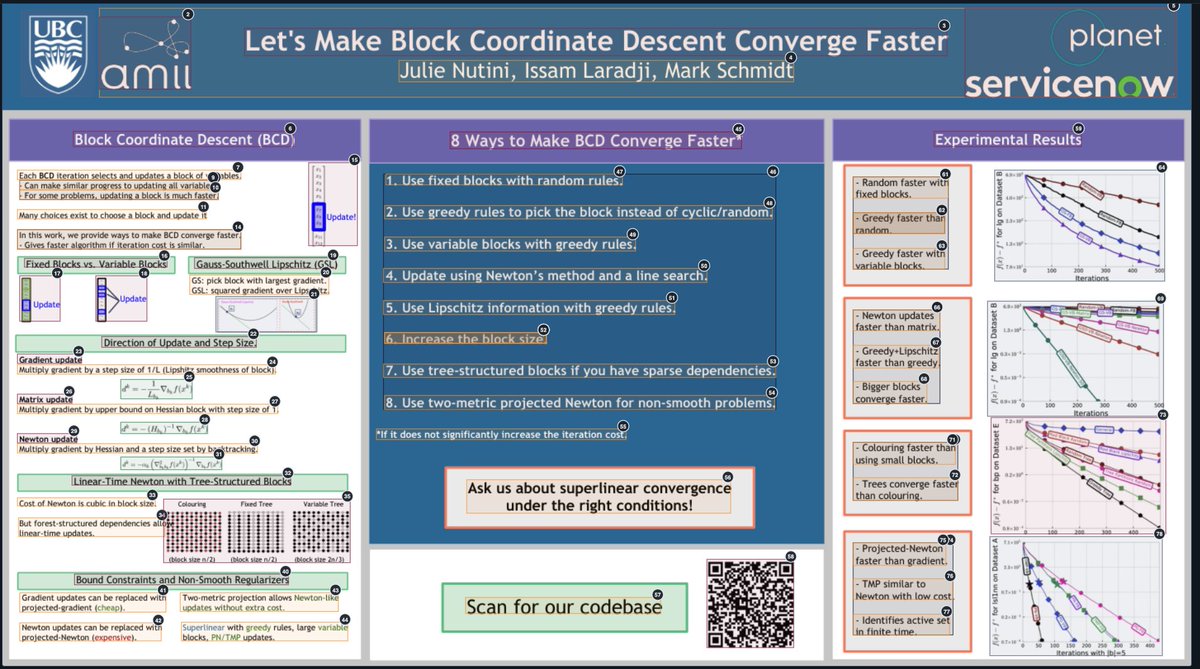

Hopes clustered around a few basic desires, but concerns about AI were more varied. Most common were AI unreliability, jobs and the economy, and maintaining human autonomy and agency.

Notably, economic concern was the strongest predictor of overall AI sentiment.

Source: https://nitter.net/AnthropicAI/status/2034302161514082462#m

R to @AnthropicAI: What do people most want from AI?

Roughly one third want AI

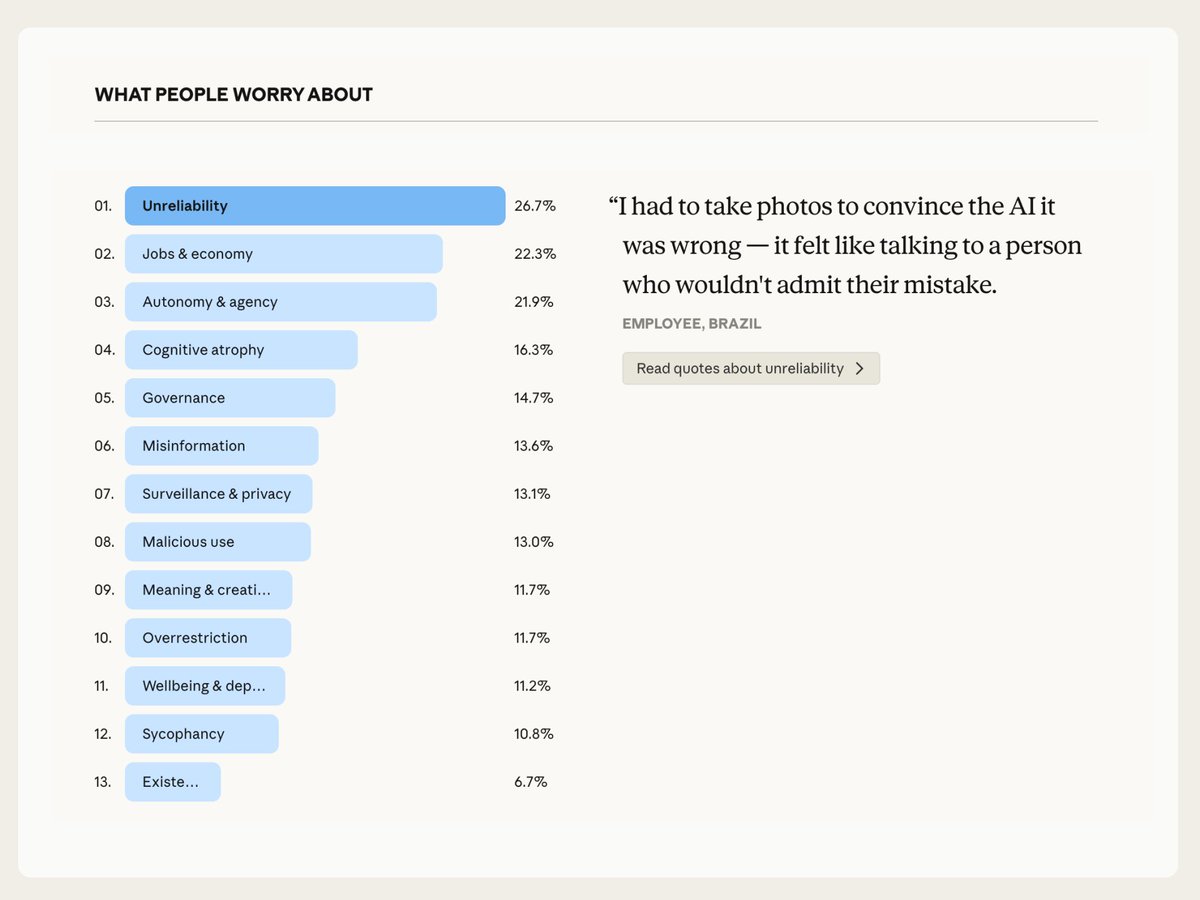

What do people most want from AI?

Roughly one third want AI to improve their quality of life—to find more time, achieve financial security, or carve out mental bandwidth. Another quarter want AI to help them do better and more fulfilling work.

Source: https://nitter.net/AnthropicAI/status/2034302157307220229#m