260319 X (Twitter) roundup

RT by @hwchase17: This is very exciting.

It’s an open source version of Ramp’s

This is very exciting.

It’s an open source version of Ramp’s Inspect.

Will be digging into this - could be a much more powerful replacement for Symphony

Harrison Chase (@hwchase17)

a lot of engineering orgs (Stripe, Ramp, Coinbase) are building internal cloud coding agents

we're releasing a fully OSS one today - every company should have the power of cloud agents at their fingertips

Source: https://nitter.net/ryancarson/status/2034188569258958878#m

RT by @hwchase17: Fantastic write-up on SKILLS by the GOAT @trq212

I have been

Fantastic write-up on SKILLS by the GOAT @trq212

I have been leaning into SKILLS a ton too, both for my Claude Code setup, and also for building agentic software (mostly with DeepAgents from LangChain).

You should read his post top to bottom, and if you don't have much time, skip my comments and just jump straight into it. Here's my stream of consciousness on them. Note that I use them mostly to orchestrate agentic software and to do compound engineering.

- I use them a lot for things like code review, session logging, auditing my documentation, distilling information from other sources (e.g. adjacent repos), and updating my stored context automatically

- I rely *heavily* on progressive disclosure. LLMs like a 'map of the territory' and will happily navigate your files. Use this to your advantage within skills. Any single MD file longer than 100 lines is a smell to me.

- Use skills to clean up and improve your skills. For example, my /update-docs skill makes sure that other skills are up to date with the current info in the repo, and actually do progressive disclosure

- Using hooks to run skills, and relying on CI/CD to run skills, can make the feedback loop / management of your context much better.

- You can distill skills from your claude context directly. Claude Code stores all of its sessions and plans. You can have it introspect itself to help you come up with good skills to build.

- Understand the front-matter because it is important (e.g. what tools a skill can use, how it gets executed - e.g. fork)

- Skills themselves should use progressive disclosure internally. Map of the territory all the way down.

- Bake feedback loops into your skills. Most of my skills have some aspect of generating distilled context that my agents can use later (e.g. adding to a WORKLOG.md, or storing a distilled version of a session in a session_{xyz}.md)

- Don't over-prescribe stuff. My early SKILLS were *way* too long, and now models are good at just figuring stuff out. Let them take the wheel a bit more

- If you are using LLMs as part of a product (e.g. not just Claude Code, but you use something like Claude Agents SDK or DeepAgents) - write your traces to the file system, and build SKILLs that inspect those traces - this is my primary way to make my context better. Basically an automated version of hill-climbing

- If you ever find yourself trying to bootstrap a thing yet again, figure out how you can turn that into a SKILL

I know this was super disorganized and a bit rambling but trying to timebox this to 5 minutes because I have other stuff to do. Probably missed a lot and also still have lots to learn. Either way, do skills stuff. It works great.
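
The "any single MD file longer than 100 lines is a smell" rule of thumb above is easy to automate. A minimal sketch, assuming skills and docs live as `.md` files under one directory; the function name `find_long_markdown` is hypothetical and not part of Claude Code or DeepAgents:

```python
from pathlib import Path

MAX_LINES = 100  # threshold taken from the rule of thumb in the post


def find_long_markdown(root: Path, max_lines: int = MAX_LINES) -> list[tuple[Path, int]]:
    """Return (path, line_count) for every .md file under root longer than max_lines."""
    offenders = []
    for path in sorted(root.rglob("*.md")):
        line_count = len(path.read_text(encoding="utf-8").splitlines())
        if line_count > max_lines:
            offenders.append((path, line_count))
    return offenders
```

Calling `find_long_markdown(Path("."))` from a hook or a CI job would list candidate files to split into smaller, linked documents.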

Thariq (@trq212)

Source: https://nitter.net/mstockton/status/2034095691648098606#m

RT by @hwchase17: build agents with LangSmith and let them execute code securely

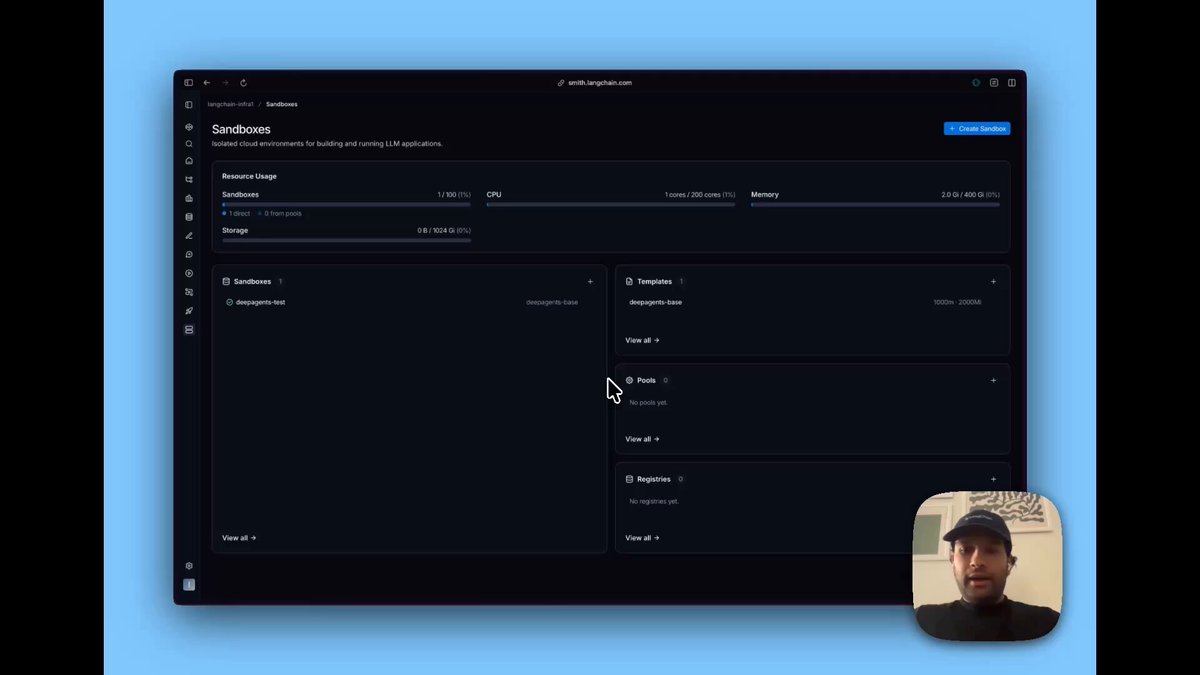

build agents with LangSmith and let them execute code securely. We’re launching LangSmith Sandboxes today!

LangChain (@LangChain)

🚀 Today we're launching LangSmith Sandboxes

Agents get a lot more useful when they can run code: analyze data, call APIs, build entire applications.

Sandboxes give them a safe place to do it with ephemeral, locked-down environments you control.
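
To make the "ephemeral" idea concrete, here is a rough sketch using only the Python standard library. It is not the LangSmith Sandboxes API (which this post does not describe), and the helper name `run_in_scratch_dir` is hypothetical. It shows only the throwaway-environment pattern, with no real isolation:

```python
import subprocess
import sys
import tempfile
from pathlib import Path


def run_in_scratch_dir(code: str, timeout_s: float = 5.0) -> subprocess.CompletedProcess:
    """Run Python code in a throwaway directory that is deleted afterwards.

    This only illustrates the "ephemeral" part. It is NOT a security boundary:
    the child process keeps the parent's user permissions and network access.
    """
    with tempfile.TemporaryDirectory() as scratch:
        script = Path(scratch) / "main.py"
        script.write_text(code, encoding="utf-8")
        return subprocess.run(
            [sys.executable, "-I", str(script)],  # -I: isolated mode, ignores env vars and user site
            cwd=scratch,
            capture_output=True,
            text=True,
            timeout=timeout_s,  # raises subprocess.TimeoutExpired if exceeded
        )
```

A real sandbox adds what this sketch lacks: filesystem, network, and resource restrictions enforced outside the process.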

Now in Private Preview.

Learn more: blog.langchain.com/introduci…

Join the waitlist: langchain.com/langsmith-sand…

Source: https://nitter.net/samecrowder/status/2034123616720421210#m

RT by @hwchase17: The best way to earn a PhD in Agent Engineering/Harness fundam

The best way to earn a PhD in Agent Engineering/Harness fundamentals is to just point your LLM to the Open Source releases by @LangChain and start asking questions on the code base. Amazing work @hwchase17 and @Vtrivedy10

Source: https://nitter.net/abhi__katiyar/status/2034040324268654842#m

RT by @hwchase17: This is huge!

LangChain is launching LangSmith Sandboxes, wh

This is huge!

LangChain is launching LangSmith Sandboxes, which makes it easy to write and execute code in agents. @LangChain

Now in private preview. Find the details 👇

Source: https://nitter.net/itsafiz/status/2033976665299456170#m

Open Models, Open Runtime, Open Harness - Building your own AI agent with LangCh

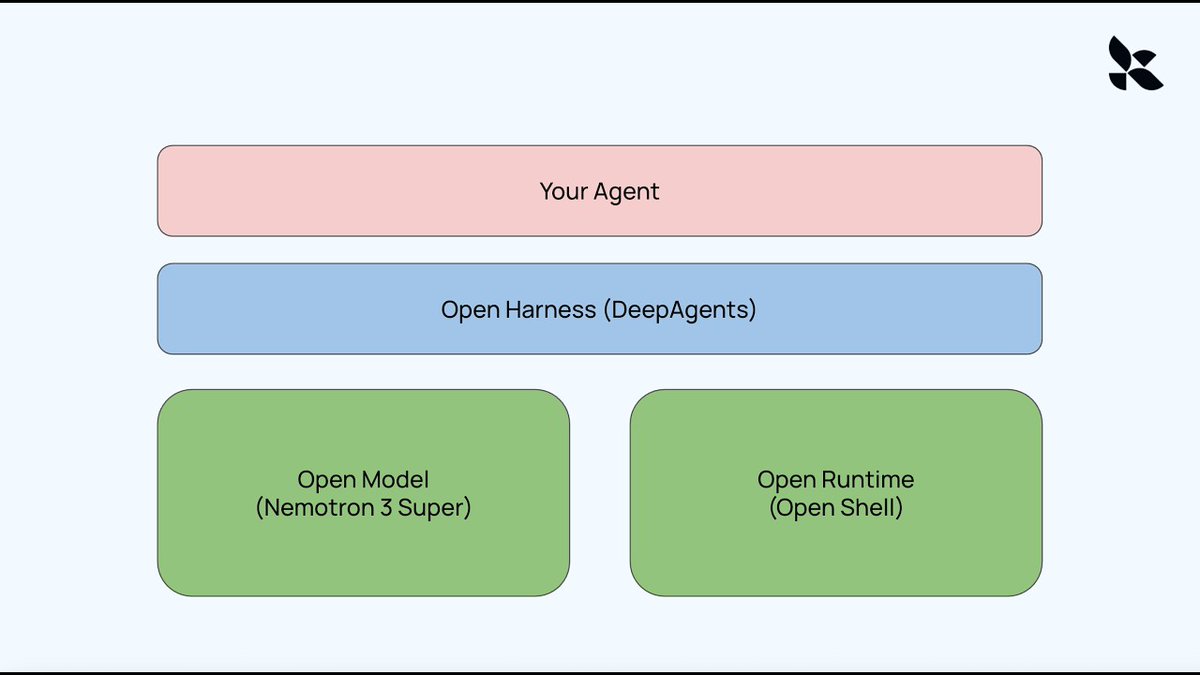

Open Models, Open Runtime, Open Harness - Building your own AI agent with LangChain and Nvidia

Claude Code, OpenClaw, Manus and other agents all use the same architecture under the hood. They consist of a model, a runtime (environment), and a harness. In this video, we show how to create a completely open version of this:

Open Models: Nemotron 3 Super

Open Runtime: Nvidia's new OpenShell

Open Harness: DeepAgents
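
The model / runtime / harness split can be sketched in a few lines. This is an illustrative toy, not how DeepAgents, OpenShell, or Nemotron are wired together; `EchoRuntime`, `scripted_model`, and `harness` are hypothetical stand-ins:

```python
from typing import Callable, Protocol


class Runtime(Protocol):
    def execute(self, command: str) -> str: ...


class EchoRuntime:
    """Stand-in runtime: a real one would run the command in an isolated environment."""

    def execute(self, command: str) -> str:
        return f"ran: {command}"


# A "model" here is any function mapping the transcript so far to the next action.
Model = Callable[[list[str]], str]


def scripted_model(transcript: list[str]) -> str:
    """Stand-in model: requests one command, then finishes."""
    return "run ls" if len(transcript) == 1 else "done"


def harness(model: Model, runtime: Runtime, task: str, max_steps: int = 5) -> list[str]:
    """The harness owns the loop: ask the model, run its commands, feed results back."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        action = model(transcript)
        transcript.append(f"model: {action}")
        if action == "done":
            break
        if action.startswith("run "):
            transcript.append(f"runtime: {runtime.execute(action[4:])}")
    return transcript
```

Because the three pieces only meet at these narrow interfaces, each can be swapped for an open alternative without touching the other two.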

Video: piped.video/BEYEWw1Mkmw

Links:

OpenShell DeepAgent: github.com/langchain-ai/open…

Deep Agents: github.com/langchain-ai/deep…

OpenShell: github.com/NVIDIA/OpenShell

Source: https://github.com/langchain-ai/openshell-deepagent

RT by @ylecun: Just to get it straight. Donald Trump has:

- threatened Canada (

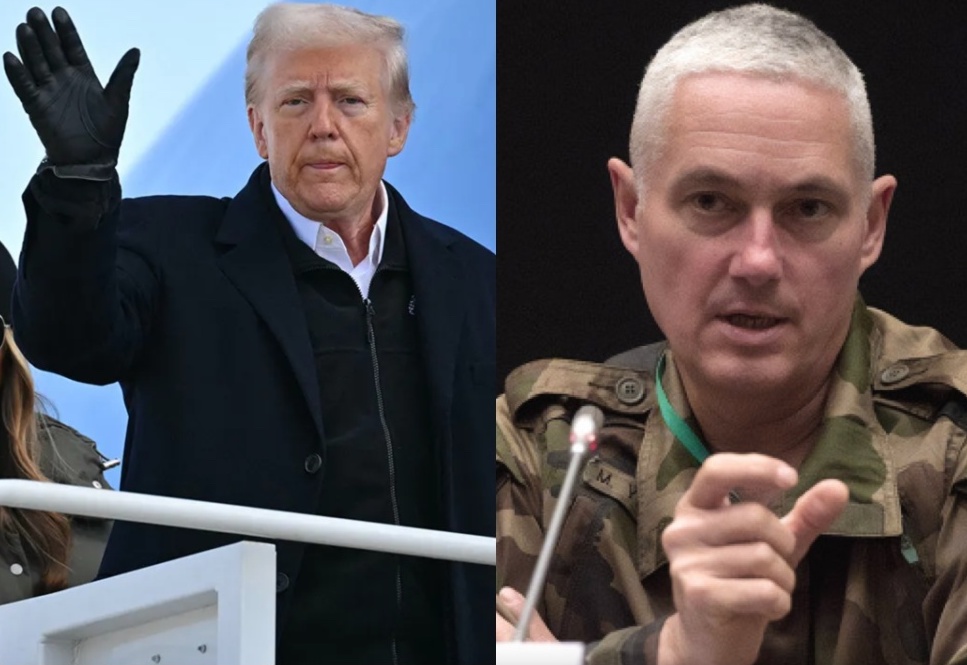

Just to get it straight. Donald Trump has:

- threatened Canada (a NATO member) with invasion

- threatened to occupy Greenland (part of Denmark, a NATO member)

- announced several times that he wants to leave NATO

- refused to support Ukraine, even though the United States gave security assurances to Ukraine in the Budapest Memorandum of 1994

- actively finances Putin's war against Ukraine by easing sanctions against Russia

- denied that soldiers from European countries have ever supported the United States, thereby spitting on the legacy of fallen European soldiers who supported U.S. operations

- imposed witless tariffs on its allies

- insulted the leaders of allied countries over the past months

And now he wonders why not everyone comes running when he calls.

The situation in the Strait of Hormuz is a mess for the whole world. But Donald Trump himself is responsible for this geopolitical chaos.

Source: https://nitter.net/anna_p_neumann/status/2033855833335828573#m

RT by @ylecun: BREAKING: French General Michel Yakovleff HUMILIATES Trump for be

BREAKING: French General Michel Yakovleff HUMILIATES Trump for begging Europe to get involved in his Iran War, says that it would be like "buying cheap tickets for the Titanic" after it hit the iceberg.

This is beyond brutal...

"We have five reasons to say no to him, in fact," said Yakovleff. "So, the first one is that he didn't understand that if he wants to carry out a NATO operation, NATO has to take command. So, there will be an American general, but it's a single operation."

“You can’t have an American operation where they’re bombing whatever they can and then below that, the Europeans doing something else,” Yakovleff said. “No, no, no, it has to be one sole operation, under a NATO flag. I don’t think he understood that.”

Yakovleff served as a three-star general in the French Army, was commander of the French Foreign Legion, and served in top positions in NATO. He's a highly respected military expert in France and regularly weighs in on issues of international importance.

Trump has been pleading with allied nations to get involved in his Iran fiasco. Iranian missiles and drones have made it impossible for oil tankers to obtain insurance to traverse the Strait of Hormuz, through which 20% of the world's petroleum normally passes. Oil prices are skyrocketing. So far, Japan, Australia, the United Kingdom, and the European Union have refused Trump's request.

General Yakovleff went on to point out that Trump's strategic goals, beyond forcing open the strait, are vague and undefined. If NATO nations were even going to consider involvement, they would need the United States to explain explicitly in writing what the goals are.

"And it's not tweets, and it's not things that change every two minutes. So, already there, it's going to be necessary for Trump himself to know what he wants," said the general.

He said that there's also the issue of the lack of "confidence" in Trump. It's well-known that he regularly abandons his allies and he could do so here immediately after other nations got involved.

“He would let us down whenever it suited him," said Yakovleff.

He ended his tirade by comparing Trump to the captain of the Titanic trying to "sell cheap tickets" for his voyage "after having hit the iceberg."

“And the last argument is American: you don’t reinforce failure. I learnt that at the U.S. Army War College. You don’t reinforce failure, you move on, you find something else,” he added. "So, there are a lot of reasons to say no."

Please ❤️ and share if you think that the Iran War is a total disaster!

Source: https://nitter.net/OccupyDemocrats/status/2033932563665047859#m

We're updating our Responsible Scaling Policy to its third version.

Since it ca

We're updating our Responsible Scaling Policy to its third version.

Since it came into effect in 2023, we’ve learned a lot about the RSP’s benefits and its shortcomings. This update improves the policy, reinforcing what worked and committing us to even greater transparency.

Source: https://nitter.net/AnthropicAI/status/2026393790500540566#m

Related notes

- [[260318_x]]: similar keywords

- [[260317_x]]: similar keywords

- [[260313_x]]: similar keywords

- [[260310_x]]: similar keywords

- [[260319_tg]]: similar keywords

- [[260314_x]]: similar keywords

New course: Agent Memory: Building Memory-Aware Agents, built in partnership wit

New course: Agent Memory: Building Memory-Aware Agents, built in partnership with @Oracle and taught by @richmondalake and Nacho Martínez.

Many agents work well within a single session but their memory resets once the session ends. Consider a research agent working on dozens of papers across multiple days: without memory, it has no way to store and retrieve what it learned across sessions. This short course teaches you to build a memory system that enables agents to persist memory and thereby learn across sessions.

You'll design a Memory Manager that handles different memory types, implement semantic tool retrieval that scales without bloating the context, and build write-back pipelines that let your agent autonomously update and refine what it knows over time.

Skills you'll gain:

- Build persistent memory stores for different agent memory types

- Implement a Memory Manager that orchestrates how your agent reads, writes, and retrieves memory

- Treat tools as procedural memory and retrieve only relevant ones at inference time using semantic search
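
As a rough illustration of memory that survives across sessions, here is a minimal sketch. It is not the course's implementation: the `MemoryManager` class below is hypothetical, it persists to a local JSON file instead of a database, and it ranks by word overlap as a stand-in for semantic search:

```python
import json
from pathlib import Path


class MemoryManager:
    """Hypothetical manager that persists notes per memory type in a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Reload whatever an earlier session wrote, if anything.
        self.store: dict[str, list[str]] = (
            json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
        )

    def write(self, memory_type: str, note: str) -> None:
        """Append a note and write the whole store back to disk."""
        self.store.setdefault(memory_type, []).append(note)
        self.path.write_text(json.dumps(self.store, indent=2), encoding="utf-8")

    def retrieve(self, memory_type: str, query: str, k: int = 3) -> list[str]:
        """Rank notes by word overlap with the query (stand-in for semantic search)."""
        query_words = set(query.lower().split())
        scored = [
            (len(query_words & set(note.lower().split())), note)
            for note in self.store.get(memory_type, [])
        ]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
        return [note for _, note in ranked[:k]]
```

Returning only the top `k` matches is what keeps the context small: the agent sees a handful of relevant notes, not the whole store.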

Join and learn to build agents that remember and improve over time!

deeplearning.ai/short-course…

Source: https://nitter.net/AndrewYNg/status/2034314027678192114#m

RT by @hwchase17: langchain has had a model profile attribute since ~november! c

langchain has had a model profile attribute since ~november! cool to see this pattern getting attention

every chat model auto-loads capabilities at init: context window, tool calling, structured output, modalities, reasoning.

works across Anthropic, OpenAI, Google, Bedrock, etc. thanks to data powered by the open-source models.dev project.

we use the data for auto-configuring agents, summarization middleware, input gating, and more.
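
Input gating from a capability profile might look roughly like this. The sketch does not use LangChain's actual profile attribute or models.dev data; `ModelProfile`, its field names, and the two entries in `PROFILES` are made up for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical capability record; field names are illustrative."""
    max_input_tokens: int
    tool_calling: bool
    image_inputs: bool


# Made-up entries for illustration, not real model data.
PROFILES = {
    "small-text-model": ModelProfile(8_000, tool_calling=False, image_inputs=False),
    "large-multimodal-model": ModelProfile(200_000, tool_calling=True, image_inputs=True),
}


def gate_request(model_name: str, prompt_tokens: int, has_image: bool, needs_tools: bool) -> list[str]:
    """Return the reasons a request should be rejected (empty list means it may proceed)."""
    profile = PROFILES[model_name]
    problems = []
    if prompt_tokens > profile.max_input_tokens:
        problems.append("prompt exceeds context window")
    if has_image and not profile.image_inputs:
        problems.append("model does not accept images")
    if needs_tools and not profile.tool_calling:
        problems.append("model does not support tool calling")
    return problems
```

Checking capabilities before the call turns a provider-side error into a local decision, such as summarizing the input or routing to another model.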

more in thread 🧵

Lance Martin (@RLanceMartin)

a useful trick: Claude API now programmatically lists capabilities for every model (context window, thinking mode, context management support, etc). just ask Claude Code or use the API directly.

platform.claude.com/docs/en/…

Source: https://nitter.net/masondrxy/status/2034357640537468971#m

Harness engineering is the future

Harness engineering is the future

brandon in (@brandonin)

One thing that @hwchase17 touched on is that we are moving to harness engineering.

This is exactly what I’ve been building for 6 months at @shiprail_ai.

We’re making agents smarter. Let’s work together.

Source: https://nitter.net/hwchase17/status/2034370301585490220#m

RT by @hwchase17: One thing that @hwchase17 touched on is that we are moving to

One thing that @hwchase17 touched on is that we are moving to harness engineering.

This is exactly what I’ve been building for 6 months at @shiprail_ai.

We’re making agents smarter. Let’s work together.

Source: https://nitter.net/brandonin/status/2034361457291493816#m

Agreed! Good thing we’ve got you covered with langsmith

Agreed! Good thing we’ve got you covered with langsmith

AvaSynth (@li_xinrui)

The real bottleneck isn't the architecture—it's the evaluation harness. Most teams nail the model + runtime combo but fail at measuring whether their agent actually solves the intended problem vs just completing tasks that look right.
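
The gap between "completed tasks that look right" and "actually solved the problem" can be measured by checking outputs independently of the agent's own report. A minimal sketch, with hypothetical names (`AgentResult`, `EvalCase`, `evaluate`) that are not part of LangSmith:

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentResult:
    claimed_success: bool  # what the agent reports about itself
    output: str            # the artifact it actually produced


@dataclass
class EvalCase:
    task: str
    check: Callable[[str], bool]  # independent check on the output


def evaluate(agent: Callable[[str], AgentResult], cases: list[EvalCase]) -> dict[str, float]:
    """Compare self-reported completion with independently verified success."""
    if not cases:
        raise ValueError("need at least one eval case")
    results = [(agent(case.task), case) for case in cases]
    n = len(cases)
    claimed = sum(r.claimed_success for r, _ in results)
    verified = sum(case.check(r.output) for r, case in results)
    false_success = sum(r.claimed_success and not case.check(r.output) for r, case in results)
    return {"claimed": claimed / n, "verified": verified / n, "false_success": false_success / n}
```

The `false_success` rate is the number to watch: it counts runs the agent marked as done that the independent check rejects.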

Source: https://nitter.net/hwchase17/status/2034345897052832178#m

RT by @hwchase17: New Conceptual Guide: You don’t know what your agent will do u

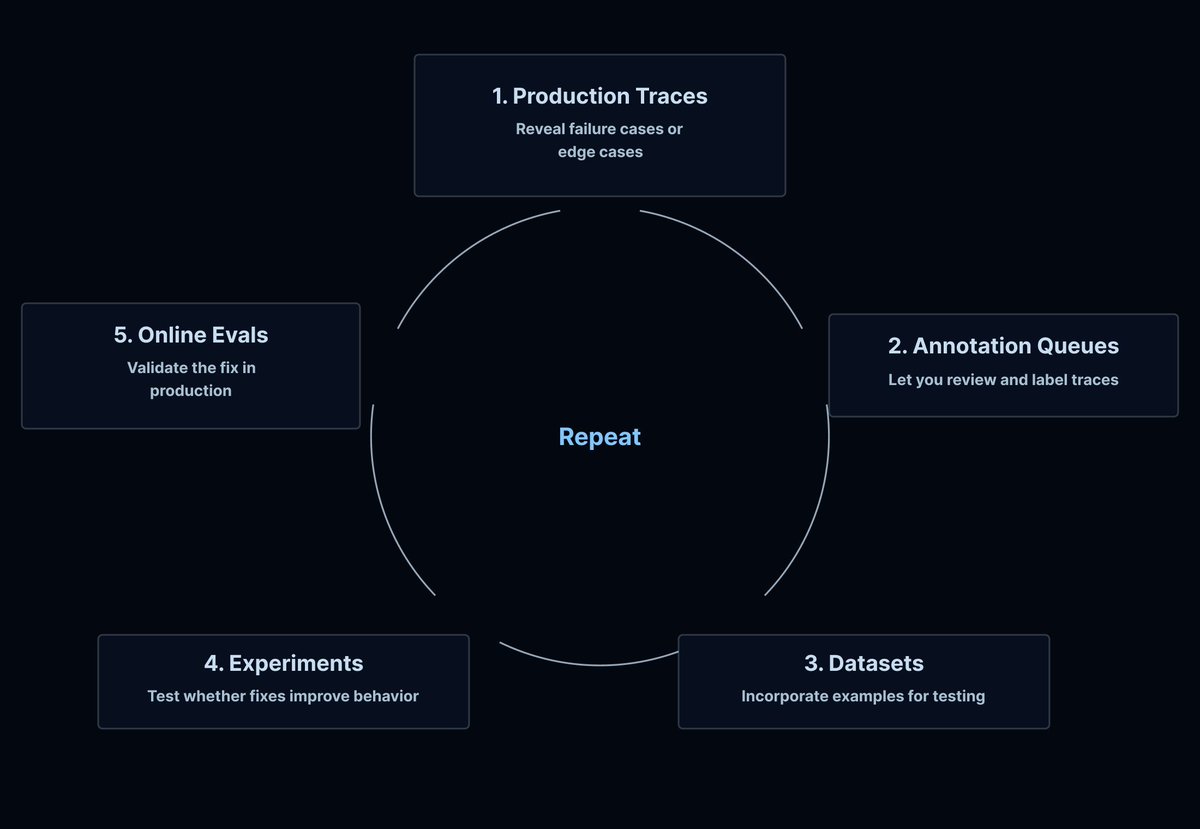

New Conceptual Guide: You don’t know what your agent will do until it’s in production 👀

With traditional software, you ship with reasonable confidence. Test coverage handles most paths. Monitoring catches errors, latency, and query issues. When something breaks, you read the stack trace.

Agents are different. Natural language input is unbounded. LLMs are sensitive to subtle prompt variations. Multi-step reasoning chains are hard to anticipate in dev.

Production monitoring for agents needs a different playbook. In our latest conceptual guide, we cover why agent observability is a different problem, what to actually monitor, and what we've learned from teams deploying agents at scale.

Read the guide ➡️ blog.langchain.com/you-dont-…

Source: https://nitter.net/LangChain/status/2034314483259031965#m

RT by @hwchase17: Polly is our AI assistant built directly into LangSmith to hel

Polly is our AI assistant built directly into LangSmith to help you debug, analyze, and improve your agents — now generally available.

Now, Polly lives on every page of LangSmith, remembers your full session as you navigate, and can take action to update prompts, compare experiments, write evaluators, and more.

Read the blog: blog.langchain.com/polly-lan…

See the docs: docs.langchain.com/langsmith…

Try Polly in LangSmith: smith.langchain.com/

Source: https://nitter.net/LangChain/status/2034321435418825023#m

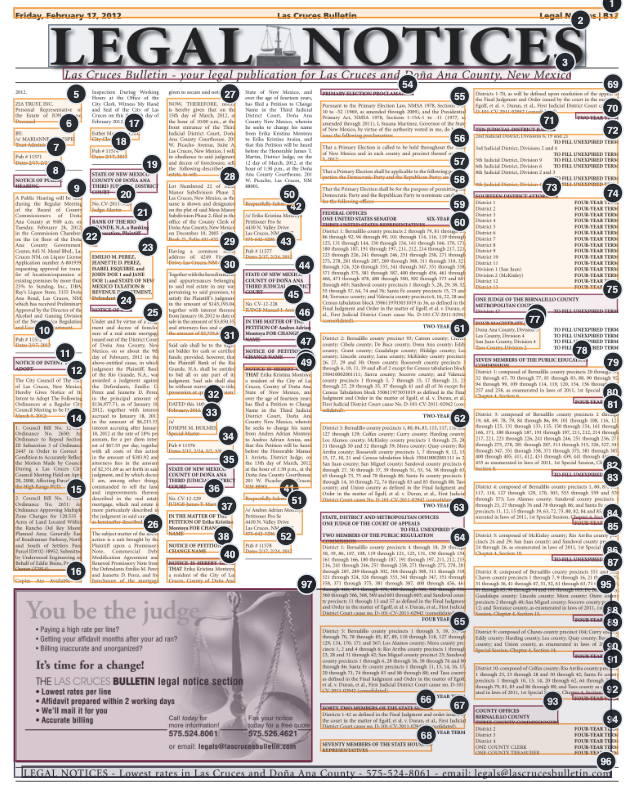

One of the biggest requirements for document OCR is visual grounding, and fronti

One of the biggest requirements for document OCR is visual grounding, and frontier models (gemini, opus, gpt-5.4) suck at it by default.

In other words they don't have a great sense of the positions of things on a page.

We've made massive strides in making sure our models are able to segment and detect every granular element in the most complex docs. This allows you to build AI agents that can surface extremely precise citations in the source documents:

✅ newspapers

✅ infographics

✅ handwritten notes

✅ product catalogs

✅ research presentations

and much more

Come check it out in LlamaParse!

cloud.llamaindex.ai/?utm_sou…

LlamaIndex 🦙 (@llama_index)

LlamaParse Agentic Plus mode now delivers precise visual grounding with bounding boxes for the most challenging document elements.

Our latest update brings major improvements to how we handle complex visual content:

📐 Complex LaTeX formulas - accurately parse mathematical expressions with precise positioning

✍️ Handwriting recognition - extract handwritten text with location coordinates

📊 Complex layouts - navigate multi-column documents and intricate formatting

📈 Infographics and charts - identify and extract data visualizations with spatial context

This means you can now build applications that not only extract text from documents but also understand exactly where that content appears on the page - perfect for creating more intelligent document analysis workflows.
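
To show what "text plus where it appears" enables, here is a small sketch of citation lookup over grounded elements. It does not reflect LlamaParse's actual output schema; `GroundedElement`, its fields, and the coordinate convention are assumptions made for illustration:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroundedElement:
    """Hypothetical parsed element: text plus its bounding box on a page."""
    page: int
    text: str
    bbox: tuple[float, float, float, float]  # (x0, y0, x1, y1), assumed page coordinates


def cite(elements: list[GroundedElement], quote: str) -> list[GroundedElement]:
    """Return every element whose text contains the quote (case-insensitive)."""
    needle = quote.lower()
    return [el for el in elements if needle in el.text.lower()]
```

An application could pass the returned `page` and `bbox` to a viewer to highlight the exact region a citation came from.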

Try LlamaParse Agentic Plus mode and see how visual grounding transforms your document parsing capabilities: cloud.llamaindex.ai?utm_sour…

Source: https://nitter.net/jerryjliu0/status/2034440027661566175#m

RT by @ylecun: One Gulf diplomat, who has direct knowledge of the Iran nuclear t

One Gulf diplomat, who has direct knowledge of the Iran nuclear talks, describes Trump envoys Witkoff and Kushner as “Israeli assets that had conspired to force the US president into entering a war from which he is now desperate to get himself out of.” trib.al/zQZwC1J

Source: https://nitter.net/KenRoth/status/2034251541385789574#m