260319 X (Twitter) Roundup

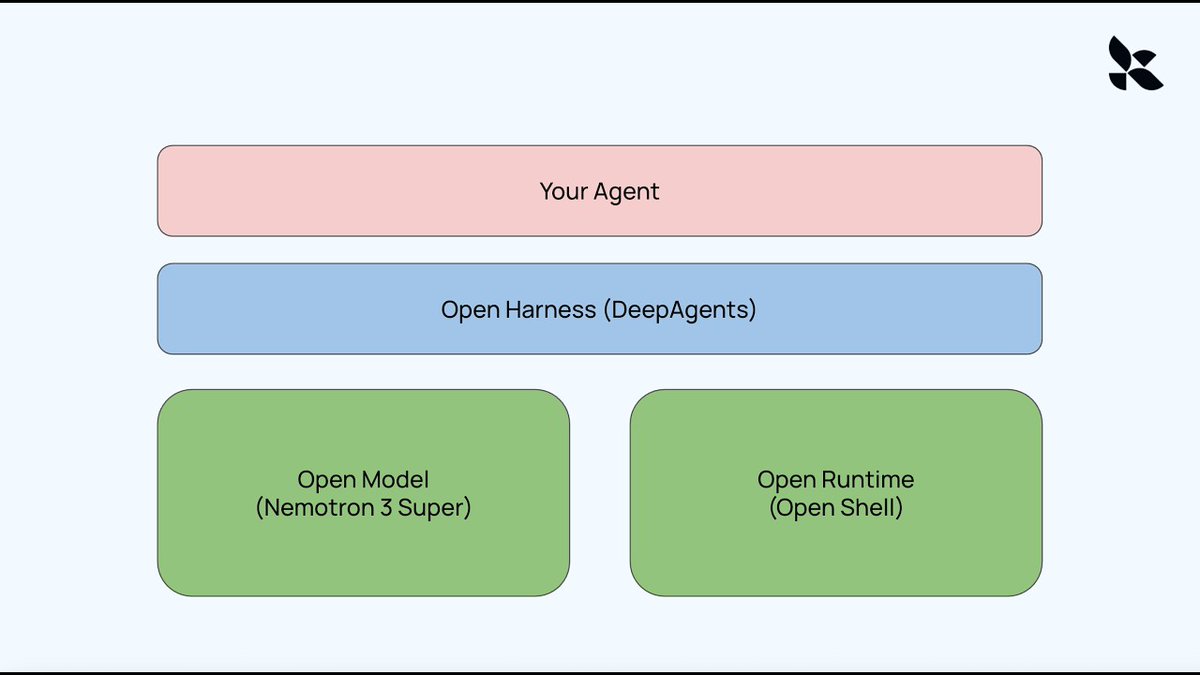

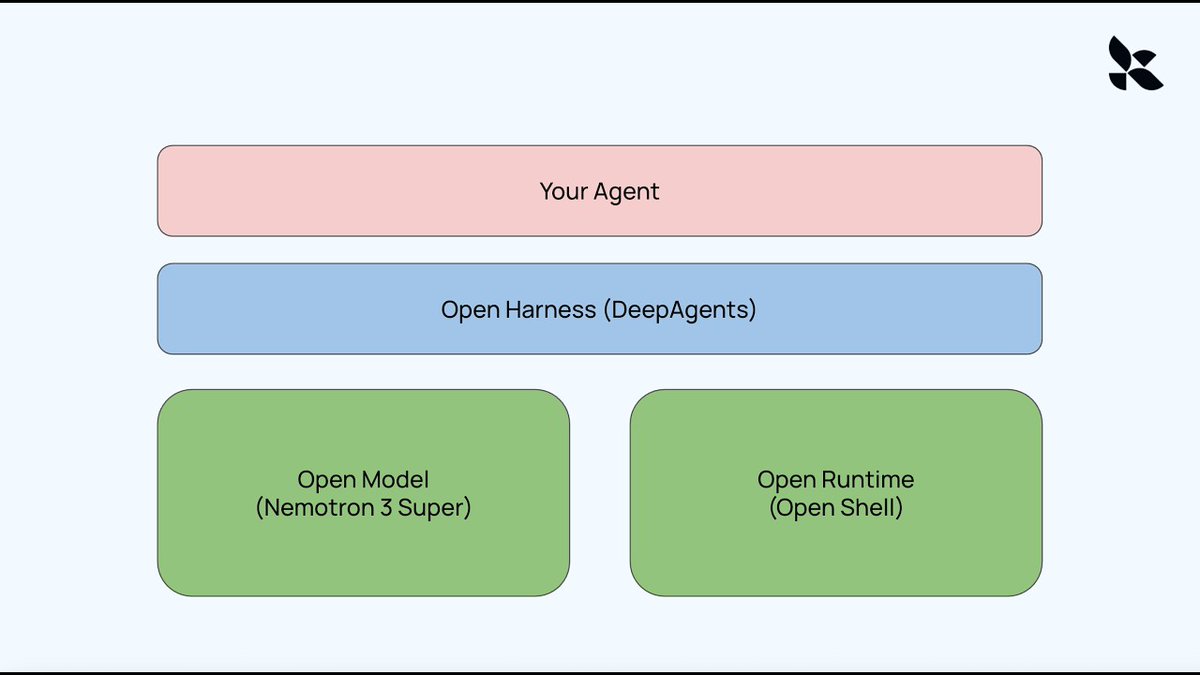

[10/10] Open Models, Open Runtime, Open Harness - Building your own AI agent with LangChain and Nvidia

Claude Code, OpenClaw, Manus and other agents all use the same architecture under the hood. They consist of a model, a runtime (environment), and a harness. In this video, we show how to create a completely open version of this:

Open Models: Nemotron 3 Super

Open Runtime: Nvidia's new OpenShell

Open Harness: DeepAgents
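To make the model / runtime / harness split concrete, here is a minimal sketch of how the three pieces might fit together. Every name in it (`Runtime`, `harness`, `scripted_model`) is hypothetical and chosen for illustration; none of it is the DeepAgents, OpenShell, or Nemotron API, and the "model" is a scripted stand-in rather than an LLM.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical types for illustration only; not taken from any real library.


@dataclass
class Runtime:
    """The environment where actions run; here just an in-memory file store."""
    files: dict[str, str] = field(default_factory=dict)

    def execute(self, action: str, arg: str) -> str:
        if action == "write":
            name, _, body = arg.partition(":")
            self.files[name] = body
            return f"wrote {name}"
        if action == "read":
            return self.files.get(arg, "<missing>")
        return f"unknown action {action}"


# The "model" is any callable that maps the transcript so far to the next step.
Model = Callable[[list[str]], tuple[str, str]]


def harness(model: Model, runtime: Runtime, task: str, max_steps: int = 5) -> list[str]:
    """The loop that feeds observations back to the model until it says 'done'."""
    transcript = [f"task: {task}"]
    for _ in range(max_steps):
        action, arg = model(transcript)
        if action == "done":
            transcript.append(f"final: {arg}")
            break
        observation = runtime.execute(action, arg)
        transcript.append(f"{action}({arg}) -> {observation}")
    return transcript


def scripted_model(transcript: list[str]) -> tuple[str, str]:
    """Stand-in for an LLM: picks the next step from the transcript length."""
    steps = [("write", "notes.txt:hello"), ("read", "notes.txt"), ("done", "ok")]
    return steps[len(transcript) - 1]
```

Swapping any one of the three parts (a different model callable, a sandboxed runtime, a harness with planning or sub-agents) leaves the other two untouched, which is the point of the separation the post describes.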

Video: piped.video/BEYEWw1Mkmw

Links:

OpenShell DeepAgent: github.com/langchain-ai/open…

Deep Agents: github.com/langchain-ai/deep…

OpenShell: github.com/NVIDIA/OpenShell

agent_orchestration claude code ron agent openclaw

Source: https://piped.video/BEYEWw1Mkmw

Score: 10/10 (claude code, openclaw, claude)

[9/10] RT by @hwchase17: Fantastic write-up on SKILLS by the GOAT @trq212

Fantastic write-up on SKILLS by the GOAT @trq212

I have been leaning into SKILLS a ton too, both for my Claude Code setup, and also for building agentic software (mostly with DeepAgents from LangChain).

You should read his post top to bottom, and if you don't have much time, skip my comments and just jump straight into it. Here's my stream of consciousness on them. Note that I use them mostly to orchestrate agentic software and to do compound engineering.

- I use them a lot for things like code review, session logging, auditing my documentation, distilling information from other sources (e.g. adjacent repos), and updating my stored context automatically

- I rely heavily on progressive disclosure. LLMs like a 'map of the territory' and will happily navigate your files. Use this to your advantage within skills. Any single MD file longer than 100 lines is a smell to me.

- Use skills to clean up and improve your skills. For example, my /update-docs skill makes sure that other skills are up to date with the current info in the repo, and actually do progressive disclosure

- Using hooks to run skills, and relying on CI/CD to run skills, can make the feedback loop / management of your context much better.

- You can distill skills from your claude context directly. Claude Code stores all of its sessions and plans. You can have it introspect itself to help you come up with good skills to build.

- Understand the front-matter because it is important (e.g. what tools a skill can use, how it gets executed - e.g. fork)

- Skills themselves should use progressive disclosure internally. Map of the territory all the way down.

- Bake feedback loops into your skills. Most of my skills have some aspect of generating distilled context that my agents can use later (e.g. adding to a WORKLOG.md, or storing a distilled version of a session in a session_{xyz}.md)

- Don't over-prescribe stuff. My early SKILLS were way too long, and now models are good at just figuring stuff out. Let them take the wheel a bit more

- If you are using LLMs as part of a product (e.g. not just Claude Code, but you use something like Claude Agent SDK or DeepAgents) - write your traces to the file system, and build SKILLs that inspect those traces - this is my primary way to make my context better. Basically an automated version of hill-climbing

- If you ever find yourself trying to bootstrap a thing yet again, figure out how you can turn that into a SKILL
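The "any single MD file longer than 100 lines is a smell" rule above is easy to automate, for example from a hook or a CI job. The following is a minimal sketch under that assumption; the function name `long_markdown_files` and the default threshold are illustrative and not part of Claude Code or DeepAgents.

```python
from pathlib import Path


def long_markdown_files(root: str, max_lines: int = 100) -> list[tuple[str, int]]:
    """Return (relative path, line count) for every .md file over max_lines."""
    base = Path(root)
    flagged = []
    for path in sorted(base.rglob("*.md")):
        count = len(path.read_text(encoding="utf-8").splitlines())
        if count > max_lines:
            flagged.append((path.relative_to(base).as_posix(), count))
    return flagged
```

A skill or CI step could call `long_markdown_files(".")` and fail, or open a cleanup task, whenever the returned list is non-empty.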

I know this was super disorganized and a bit rambling but trying to timebox this to 5 minutes because I have other stuff to do. Probably missed a lot and also still have lots to learn. Either way, do skills stuff. It works great.

Thariq (@trq212)

agent_orchestration claude code ron llm orches agent

Source: https://nitter.net/trq212

Score: 9/10 (claude code, claude)

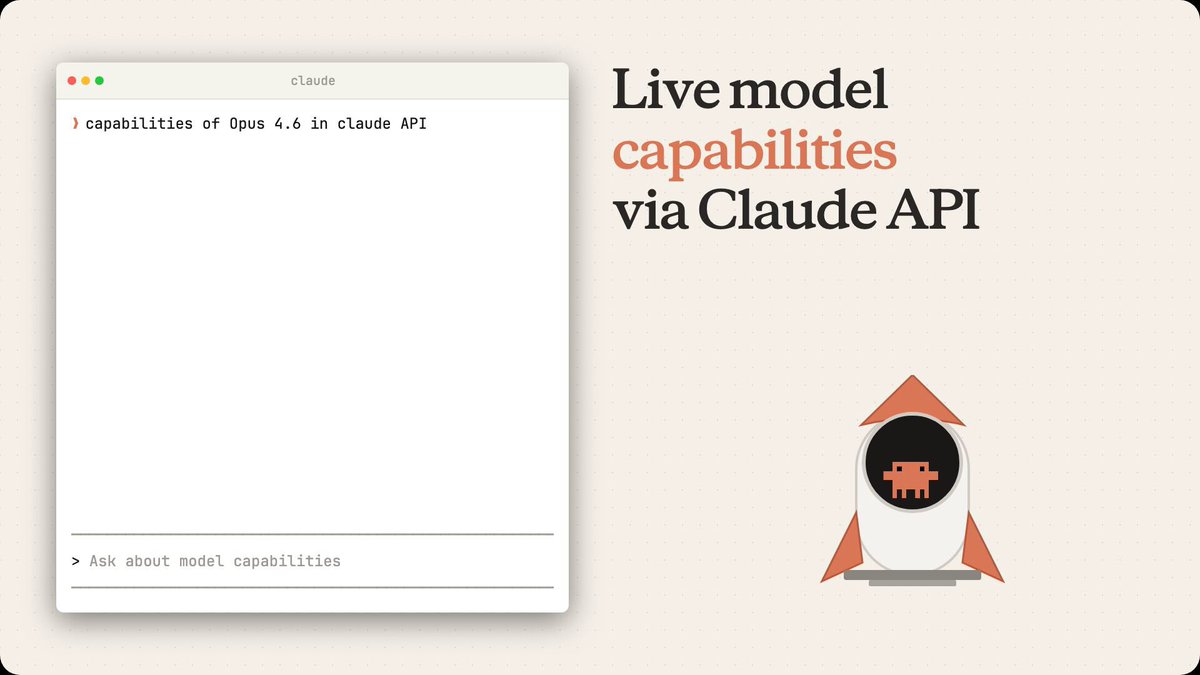

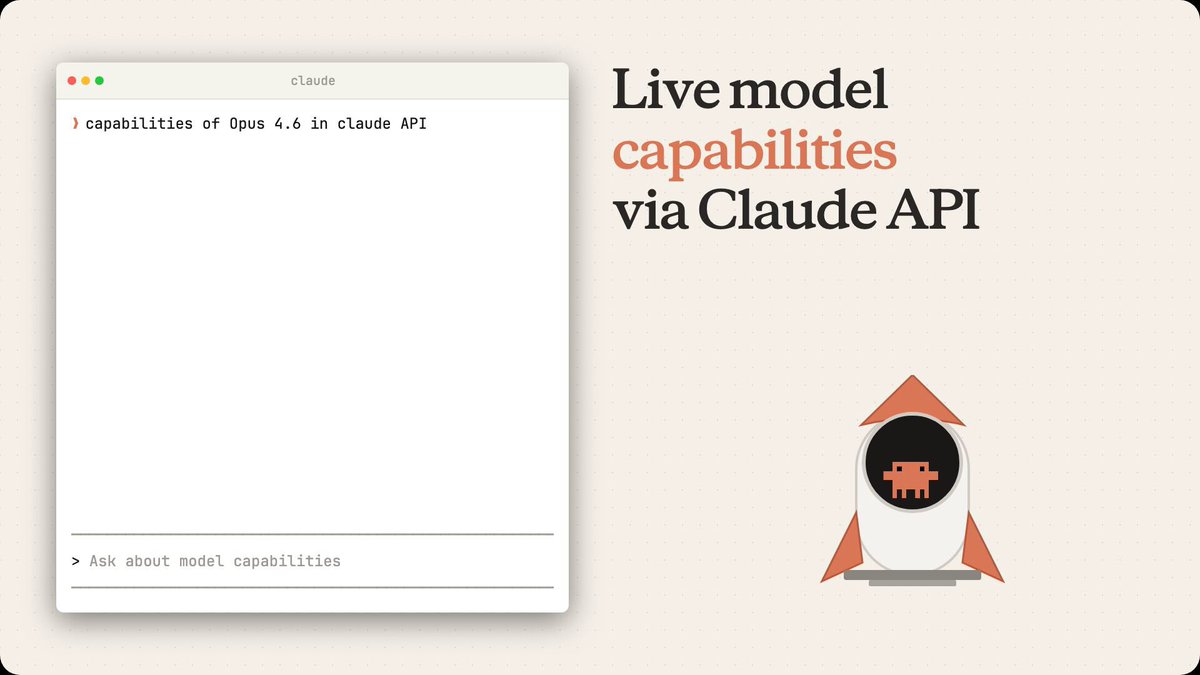

[10/10] RT by @hwchase17: langchain has had a model profile attribute since ~november! cool to see this pattern getting attention

langchain has had a model profile attribute since ~november! cool to see this pattern getting attention

every chat model auto-loads capabilities at init: context window, tool calling, structured output, modalities, reasoning.

works across Anthropic, OpenAI, Google, Bedrock, etc. thanks to data powered by the open-source models.dev project.

we use the data for auto-configuring agents, summarization middleware, input gating, and more.

more in thread 🧵
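As a rough illustration of the "input gating" use mentioned above, the sketch below decides what to do with a prompt based on a per-model capability record. The `PROFILES` table, its keys, the model names, and the numbers are all hypothetical placeholders; this is not LangChain's profile schema or the models.dev data format, and the token estimate is a crude assumption.

```python
# Hypothetical capability records; keys and values are illustrative
# placeholders, not a real schema and not real model limits.
PROFILES = {
    "small-model": {"max_input_tokens": 8_000, "tool_calling": False},
    "large-model": {"max_input_tokens": 200_000, "tool_calling": True},
}


def rough_token_count(text: str) -> int:
    """Crude estimate: about four characters per token (an assumption)."""
    return max(1, len(text) // 4)


def gate_input(model_name: str, prompt: str, needs_tools: bool = False) -> str:
    """Return 'ok', 'summarize', or 'reject' based on the model's profile."""
    profile = PROFILES[model_name]
    if needs_tools and not profile["tool_calling"]:
        return "reject"
    if rough_token_count(prompt) > profile["max_input_tokens"]:
        return "summarize"
    return "ok"
```

The same lookup could drive the other uses the post lists, such as triggering summarization middleware once a conversation approaches the context window.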

Lance Martin (@RLanceMartin)

a useful trick: Claude API now programmatically lists capabilities for every model (context window, thinking mode, context management support, etc). just ask Claude Code or use the API directly.

platform.claude.com/docs/en/…

agent_orchestration claude api claude code agent

Source: http://models.dev

Score: 10/10 (claude code, anthropic, claude api, claude)

[10/10] Important new course: Agent Skills with Anthropic, built with @AnthropicAI and taught by @eschoppik!

Important new course: Agent Skills with Anthropic, built with @AnthropicAI and taught by @eschoppik!

Skills are constructed as folders of instructions that equip agents with on-demand knowledge and workflows. This short course teaches you how to create them following best practices. Because skills follow an open standard format, you can build them once and deploy across any skills-compatible agent, like Claude Code.
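To show what "a folder of instructions" can look like on disk, here is a small sketch that scaffolds one. It assumes a layout of one directory per skill containing a `SKILL.md` file with `name` and `description` front matter; treat that layout as an assumption to check against the course or the published format. The helper name `scaffold_skill` is hypothetical.

```python
from pathlib import Path


def scaffold_skill(root: str, name: str, description: str, steps: list[str]) -> Path:
    """Create <root>/<name>/SKILL.md with front matter and a numbered workflow."""
    skill_dir = Path(root) / name
    skill_dir.mkdir(parents=True, exist_ok=True)
    lines = [
        "---",
        f"name: {name}",
        f"description: {description}",
        "---",
        "",
        "# Workflow",
        "",
    ]
    # Keep the body short; longer reference material can live in sibling files.
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    skill_file = skill_dir / "SKILL.md"
    skill_file.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return skill_file
```

For example, `scaffold_skill("skills", "code-review", "Review a diff for bugs.", ["Read the diff", "List issues"])` would write `skills/code-review/SKILL.md`.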

What you'll learn:

- Create custom skills for code generation and review, data analysis, and research

- Build complex workflows using Anthropic's pre-built skills (Excel, PowerPoint, skill creation) and custom skills

- Combine skills with MCP and subagents to create agentic systems with specialized knowledge

- Deploy the same skills across Claude.ai, Claude Code, the Claude API, and the Claude Agent SDK

Join and learn to equip agents with the specialized knowledge they need for reliable, repeatable workflows.

deeplearning.ai/short-course…

agent_orchestration claude api claude code agent

Source: https://nitter.net/AnthropicAI

Score: 10/10 (mcp, claude code, anthropic, claude api, claude)

[9/10] Job seekers in the U.S. and many other nations face a tough environment.

Job seekers in the U.S. and many other nations face a tough environment. At the same time, fears of AI-caused job loss have — so far — been overblown. However, the demand for AI skills is starting to cause shifts in the job market. I’d like to share what I’m seeing on the ground.

First, many tech companies have laid off workers over the past year. While some CEOs cited AI as the reason — that AI is doing the work, so people are no longer needed — the reality is AI just doesn’t work that well yet. Many of the layoffs have been corrections for overhiring during the pandemic or general cost-cutting and reorganization that occasionally happened even before modern AI. Outside of a handful of roles, few layoffs have resulted from jobs being automated by AI.

Granted, this may grow in the future. People who are currently in some professions that are highly exposed to AI automation, such as call-center operators, translators, and voice actors, are likely to struggle to find jobs and/or see declining salaries. But widespread job losses have been overhyped.

Instead, a common refrain applies: AI won’t replace workers, but workers who use AI will replace workers who don’t. For instance, because AI coding tools make developers much more efficient, developers who know how to use them are increasingly in demand. (If you want to be one of these people, please take our short courses on Claude Code, Gemini CLI, and Agentic Skills!)

So AI is leading to job losses, but in a subtle way. Some businesses are letting go of employees who are not adapting to AI and replacing them with people who are. This trend is already obvious in software development. Further, in many startups’ hiring patterns, I am seeing early signs of this type of personnel replacement in roles that traditionally are considered non-technical. Marketers, recruiters, and analysts who know how to code with AI are more productive than those who don’t, so some businesses are slowly parting ways with employees that aren’t able to adapt. I expect this will accelerate.

At the same time, when companies build new teams that are AI native, sometimes the new teams are smaller than the ones they replace. AI makes individuals more effective, and this makes it possible to shrink team sizes. For example, as AI has made building software easier, the bottleneck is shifting to deciding what to build — this is the Product Management (PM) bottleneck. A project that used to be assigned to 8 engineers and 1 PM might now be assigned to 2 engineers and 1 PM, or perhaps even to a single person with a mix of engineering and product skills.

The good news for employees is that most businesses have a lot of work to do and not enough people to do it. People with the right AI skills are often given opportunities to step up and do more, and maybe tackle the long backlog of ideas that couldn’t be executed before AI made the work go more quickly. I’m seeing many employees in many businesses step up to build new things that help their business. Opportunities abound!

I know these changes are stressful. My heart goes out to every family that has been affected by a layoff, to every job seeker struggling to find the role they want, and to the far larger number of people who are worried about their future job prospects. Fortunately, there’s still time to learn and position yourself well for where the job market is going. When it comes to AI, the vast majority of people, technical or nontechnical, are at the starting line, or they were recently. So this remains a great time to keep learning and keep building, and the opportunities for those who do are numerous!

[Original text: deeplearning.ai/the-batch/is… ]

Source: https://www.deeplearning.ai/the-batch/issue-339/

Score: 9/10 (claude code, claude)

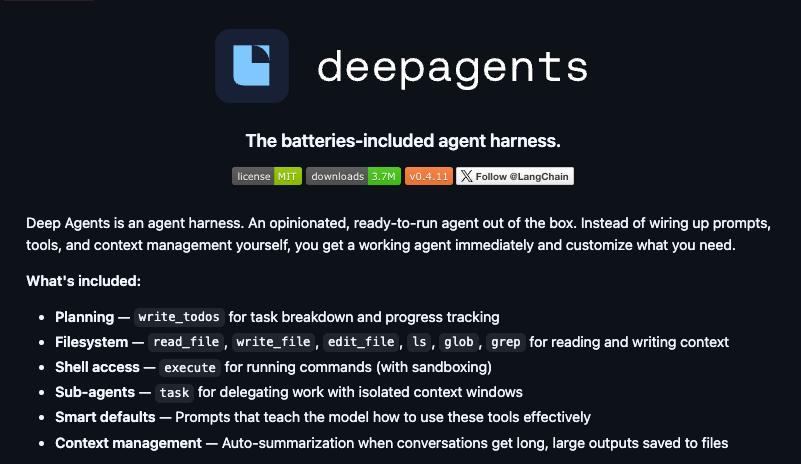

[9/10] RT by @hwchase17: LangChain just open-sourced a replica of Claude Code. It's called Deep Agents.

LangChain just open-sourced a replica of Claude Code. It's called Deep Agents.

MIT licensed, model-agnostic, and fully inspectable - so you can finally see exactly how coding agents like Claude Code are built under the hood.

The black box just became a textbook.

GitHub: github.com/langchain-ai/deep…

agent_orchestration claude code agent

Source: http://github.com/langchain-ai/deepagents

Score: 9/10 (claude code, claude)

[8/10] Should there be a Stack Overflow for AI coding agents to share learnings with each other?

Should there be a Stack Overflow for AI coding agents to share learnings with each other?

Last week I announced Context Hub (chub), an open CLI tool that gives coding agents up-to-date API documentation. Since then, our GitHub repo has gained over 6K stars, and we've scaled from under 100 to over 1000 API documents, thanks to community contributions and a new agentic document writer. Thank you to everyone supporting Context Hub!

OpenClaw and Moltbook showed that agents can use social media built for them to share information. In our new chub release, agents can share feedback on documentation — what worked, what didn't, what's missing. This feedback helps refine the docs for everyone, with safeguards for privacy and security.

We're still early in building this out. You can find details and configuration options in the GitHub repo. Install chub as follows, and prompt your coding agent to use it:

npm install -g @aisuite/chub

GitHub: github.com/andrewyng/context…

Source: https://github.com/andrewyng/context-hub

Score: 8/10 (openclaw)

[8/10] New on the Anthropic Engineering Blog

New on the Anthropic Engineering Blog: In evaluating Claude Opus 4.6 on BrowseComp, we found cases where the model recognized the test, then found and decrypted answers to it—raising questions about eval integrity in web-enabled environments.

Read more: anthropic.com/engineering/ev…

Source: https://www.anthropic.com/engineering/eval-awareness-browsecomp

Score: 8/10 (anthropic, claude)

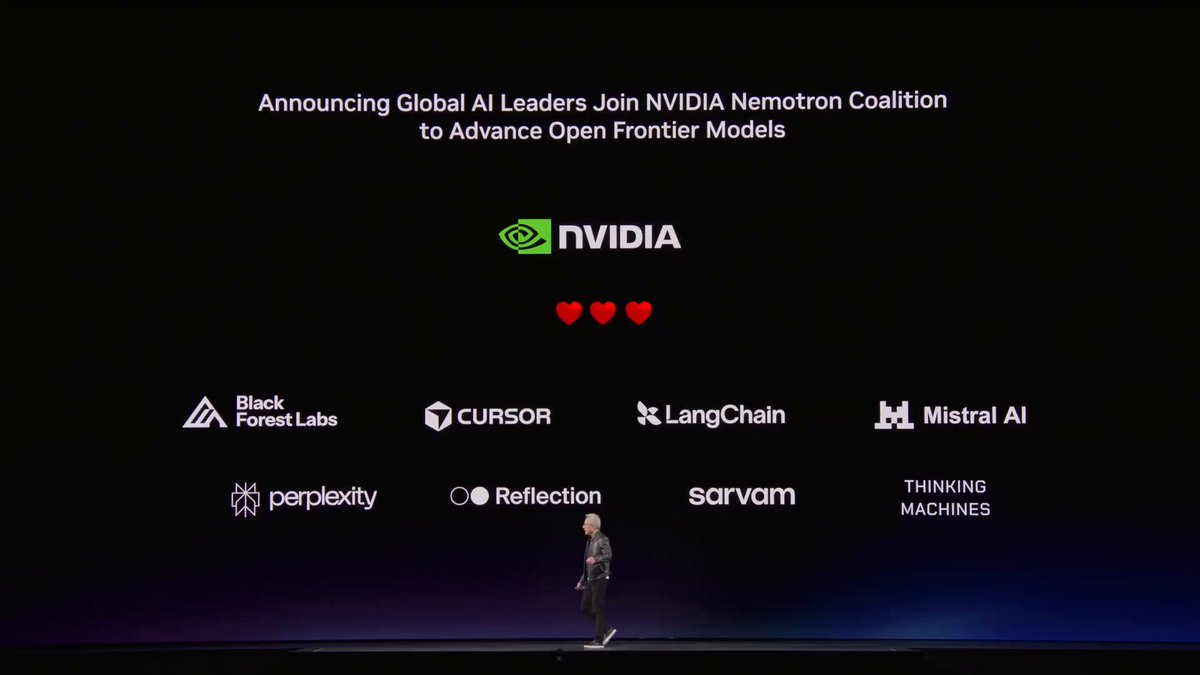

[8/10] RT by @hwchase17: The biggest software bet Jensen made today: NemoClaw.

The biggest software bet Jensen made today: NemoClaw.

He called OpenClaw "the most popular open source project in the history of humanity."

Nvidia just made it enterprise-ready.

One command. Installs the full secure stack. Deploys an AI agent on your own hardware.

Jensen compared NemoClaw to what Windows did for personal computers.

Shruti (@heyshrutimishra)

In collaboration with NVIDIA, I am giving away:

→ $100 BrevCode credits (1 winner)

→ NVIDIA swag T-shirts (multiple winners)

Register for GTC (free): nvda.ws/3ONqdE5

Reply with your registration screenshot + session that you are attending within next 3 days.

All the best! 😁

#NVIDIA #GTC2026 @nvidia @NVIDIAGTC

agent_orchestration agent openclaw

Source: https://nitter.net/heyshrutimishra/status/2033805372104888424#m

Score: 8/10 (openclaw)

[7/10] U.S. policies are driving allies away from using American AI technology.

U.S. policies are driving allies away from using American AI technology. This is leading to interest in sovereign AI — a nation’s ability to access AI technology without relying on foreign powers. This weakens U.S. influence, but might lead to increased competition and support for open source.

The U.S. invented the transistor, the internet, and the transformer architecture powering modern AI. It has long been a technology powerhouse. I love America, and am working hard towards its success. But its actions over many years, taken by multiple administrations, have made other nations worry about overreliance on it.

In 2022, following Russia’s invasion of Ukraine, U.S. sanctions on banks linked to Russian oligarchs resulted in ordinary consumers’ credit cards being shut off. Shortly before leaving office, Biden implemented “AI diffusion” export controls that limited the ability of many nations — including U.S. allies — to buy AI chips.

Under Trump, the “America first” approach has significantly accelerated pushing other nations away. There have been broad and chaotic tariffs imposed on both allies and adversaries. Threats to take over Greenland. An unfriendly attitude toward immigration — an overreaction to the chaos at the southern border during Biden’s administration — including atrocious tactics by ICE (Immigration and Customs Enforcement) that resulted in agents shooting dead Renée Good, Alex Pretti, and others. Global media has widely disseminated videos of ICE terrorizing American cities, and I have highly skilled, law-abiding friends overseas who now hesitate to travel to the U.S., fearing arbitrary detention.

Given AI’s strategic importance, nations want to ensure no foreign power can cut off their access. Hence, sovereign AI.

Sovereign AI is still a vague, rather than precisely defined, concept. Complete independence is impractical: There are no good substitutes for AI chips designed in the U.S. and manufactured in Taiwan, and a lot of energy equipment and computer hardware are manufactured in China. But there is a clear desire to have alternatives to the frontier models from leading U.S. companies OpenAI, Google, and Anthropic. Partly because of this, open-weight Chinese models like DeepSeek, Qwen, Kimi, and GLM are gaining rapid adoption, especially outside the U.S.

When it comes to sovereign AI, fortunately one does not have to build everything. By joining the global open-source community, a nation can secure its own access to AI. The goal isn’t to control everything; rather, it is to make sure no one else can control what you do with it. Indeed, nations use open source software like Linux, Python, and PyTorch. Even though no nation can control this software, no one else can stop anyone from using it as they see fit.

This is spurring nations to invest more in open source and open weight models. The UAE (under the leadership of my former grad-school officemate Eric Xing!) just launched K2 Think, an open-source reasoning model. India, France, South Korea, Switzerland, Saudi Arabia, and others are developing domestic foundation models, and many more countries are working to ensure access to compute infrastructure under their control or perhaps under trusted allies’ control.

Global fragmentation and erosion of trust among democracies is bad. Nonetheless, a silver lining would be if this results in more competition. U.S. search engines Google and Bing came to dominate web search globally, but Baidu (in China) and Yandex (in Russia) did well locally. If nations support domestic champions — a tall order given the giants’ advantages — perhaps we’ll end up with a larger number of thriving companies, which would slow down consolidation and encourage competition. Further, participating in open source is the most inexpensive way for countries to stay at the cutting edge.

Last week, at the World Economic Forum in Davos, many business and government leaders spoke about their growing reluctance to rely on U.S. technology providers and desire for alternatives. Ironically, “America first” policies might end up strengthening the world’s access to AI.

[Original text: deeplearning.ai/the-batch/is… ]

Source: https://www.deeplearning.ai/the-batch/issue-338/

Score: 7/10 (anthropic)

[7/10] RT by @hwchase17: A couple weekends ago I attended the @sotalikesfuture x @ARIA_research hackathon.

A couple weekends ago I attended the @sotalikesfuture x @ARIA_research hackathon.

Challenge 1: build a trustworthy multi-agent network. Agents that can identify each other, prove who sent what, delegate tasks with limits, and reject anything outside the trusted network.

The challenge mapped almost perfectly to what we'd been building at Kanoniv. Identity and trust for AI agents. The hackathon didn't inspire the idea, it confirmed the ecosystem needs it now.

So we shipped it.

Today we're open-sourcing kanoniv-agent-auth.

Every agent gets its own identity and keypair. Agents can delegate tasks to sub-agents with scope and budget caps, and authority narrows at each level, never widens. Every tool call is signed and verified before it executes. Revoke an agent's access and it takes effect immediately, no unwinding. Full audit trail on every action. Who did what, who authorized it, how deep in the chain.
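The "authority narrows at each level, never widens" rule and the signed tool calls can be sketched in a few lines. This is not the kanoniv-agent-auth API: all names below (`Grant`, `delegate`, `sign_call`, `authorize`) are hypothetical, and the sketch uses a shared-secret HMAC from the standard library as a stand-in for the per-agent keypair signatures the post describes.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """What an agent may do: allowed tool scopes and a spending cap."""
    scopes: frozenset
    budget: float


def delegate(parent: Grant, scopes: set, budget: float) -> Grant:
    """Child authority is the intersection of scopes and the smaller budget."""
    return Grant(parent.scopes & frozenset(scopes), min(parent.budget, budget))


def sign_call(key: bytes, tool: str, args: dict) -> str:
    """HMAC over a canonical JSON encoding of the call (stand-in for a keypair signature)."""
    payload = json.dumps({"tool": tool, "args": args}, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def authorize(grant: Grant, key: bytes, tool: str, args: dict, cost: float, signature: str) -> bool:
    """Allow the call only if it is in scope, within budget, and correctly signed."""
    expected = sign_call(key, tool, args)
    return (
        tool in grant.scopes
        and cost <= grant.budget
        and hmac.compare_digest(expected, signature)
    )
```

Because `delegate` only intersects scopes and takes the minimum budget, a sub-agent that asks for more than its parent holds simply receives the parent's limits, so authority cannot widen down the chain.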

Rust, TypeScript, Python. Same behavior across all three. MIT licensed.

github.com/kanoniv/agent-aut…

Shoutout to @ObadiaAlex and the team for organizing. The challenge was exactly the right question at the right time.

If you're building with agents that call tools, spend money, or talk to other agents, try it, tell me what breaks.

Source: https://nitter.net/sotalikesfuture

Score: 7/10 (multi-agent)

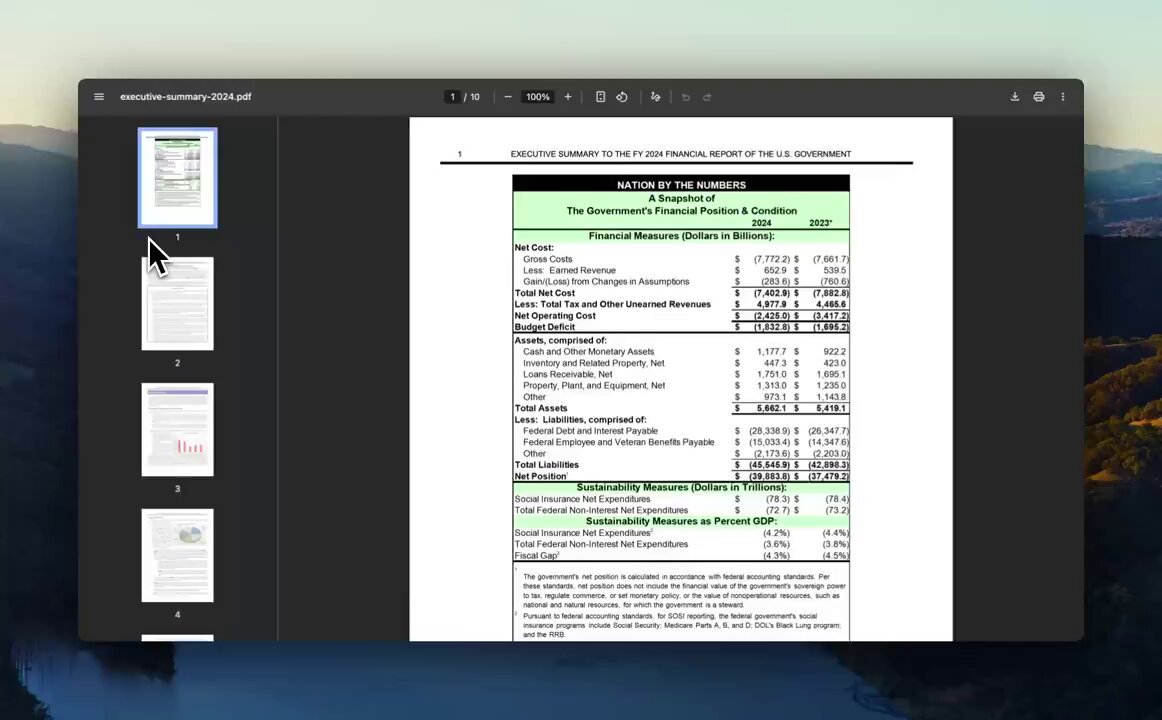

[7/10] We are really good at understanding your complex documents with charts 📈📊

We are really good at understanding your complex documents with charts 📈📊

Line charts, bar charts, pie charts, and more!

We use VLMs tuned to render charts into highly accurate markdown, beyond what the frontier models (openai, anthropic, gemini) are tuned for.

ICYMI check out @tuanacelik's tutorial: developers.llamaindex.ai/pyt…

Sign up to LlamaParse: cloud.llamaindex.ai/?utm_sou…

Tuana (@tuanacelik)

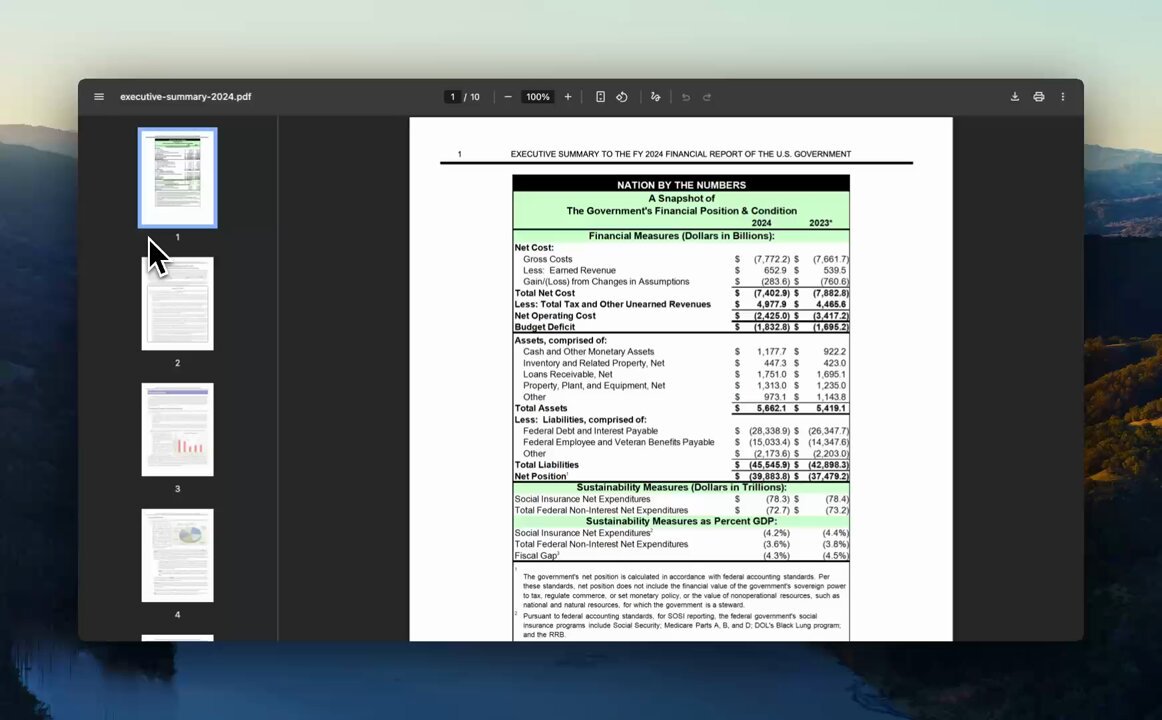

Since joining @llama_index, my focus has shifted from 'everything agents' to 'document agents': agents that can handle work over all manner of complex documents. So, I tried out the latest chart parsing capabilities of LlamaParse.

Charts in PDFs are notoriously painful to work with. You can see the data (bars, axes, labels), but actually getting it into a format you can analyze is a different matter.

I tried out parsing a U.S. Treasury executive summary PDF, pulling a grouped bar chart showing Budget Deficit vs. Net Operating Cost for fiscal years 2020–2024, and turning it into a pandas DataFrame you can run analysis on (although really you can then do whatever you like with it, such as providing it to an agent for downstream tasks).

Once parsed, the chart's underlying data comes back as a table in the items tree for that page. From there: grab the rows, construct a DataFrame, etc. In the example, I'm computing year-over-year changes in both metrics, measuring the gap between them across the five-year window, and just to be sure, I reproduced a bar chart that mirrors the original PDF visualization.
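The analysis step described above (year-over-year changes and the gap between the two metrics) can be sketched without any parsing library. The rows below are invented placeholder values, not the Treasury figures, and the field names are assumptions; the original tutorial uses a pandas DataFrame, while this sketch stays in plain Python to remain dependency-free.

```python
# Placeholder rows standing in for a parsed chart table. The numbers are
# invented for illustration and are NOT the Treasury figures.
rows = [
    {"year": 2020, "deficit": 100.0, "net_cost": 130.0},
    {"year": 2021, "deficit": 90.0, "net_cost": 120.0},
    {"year": 2022, "deficit": 60.0, "net_cost": 150.0},
]


def year_over_year(rows: list[dict], key: str) -> dict[int, float]:
    """Change in `key` from each year to the next, keyed by the later year."""
    ordered = sorted(rows, key=lambda r: r["year"])
    return {
        later["year"]: later[key] - earlier[key]
        for earlier, later in zip(ordered, ordered[1:])
    }


def gap(rows: list[dict]) -> dict[int, float]:
    """Net operating cost minus budget deficit for each year."""
    return {r["year"]: r["net_cost"] - r["deficit"] for r in rows}
```

With real parsed rows in place of the placeholders, the same two functions give the per-year deltas and the deficit-versus-cost gap that the post computes before re-plotting the chart.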

You can try it out here: colab.research.google.com/dr…

agent_orchestration ron agent

Source: https://nitter.net/tuanacelik

Score: 7/10 (anthropic)

[7/10] R to @AnthropicAI: Frontier models are now world-class vulnerability researchers, but they’re currently better at finding vulnerabilities than exploiting them.

Frontier models are now world-class vulnerability researchers, but they’re currently better at finding vulnerabilities than exploiting them.

This is unlikely to last. We urge developers to redouble their efforts to make software more secure.

Read more: anthropic.com/news/mozilla-f…

Source: https://www.anthropic.com/news/mozilla-firefox-security

Score: 7/10 (anthropic)

[7/10] R to @AnthropicAI: And you can find links to all relevant RSP documents, including the initial Frontier Safety Roadmap and the initial Risk Report

And you can find links to all relevant RSP documents, including the initial Frontier Safety Roadmap and the initial Risk Report, here: anthropic.com/responsible-sc…

Source: https://anthropic.com/responsible-scaling-poli

Score: 7/10 (anthropic)

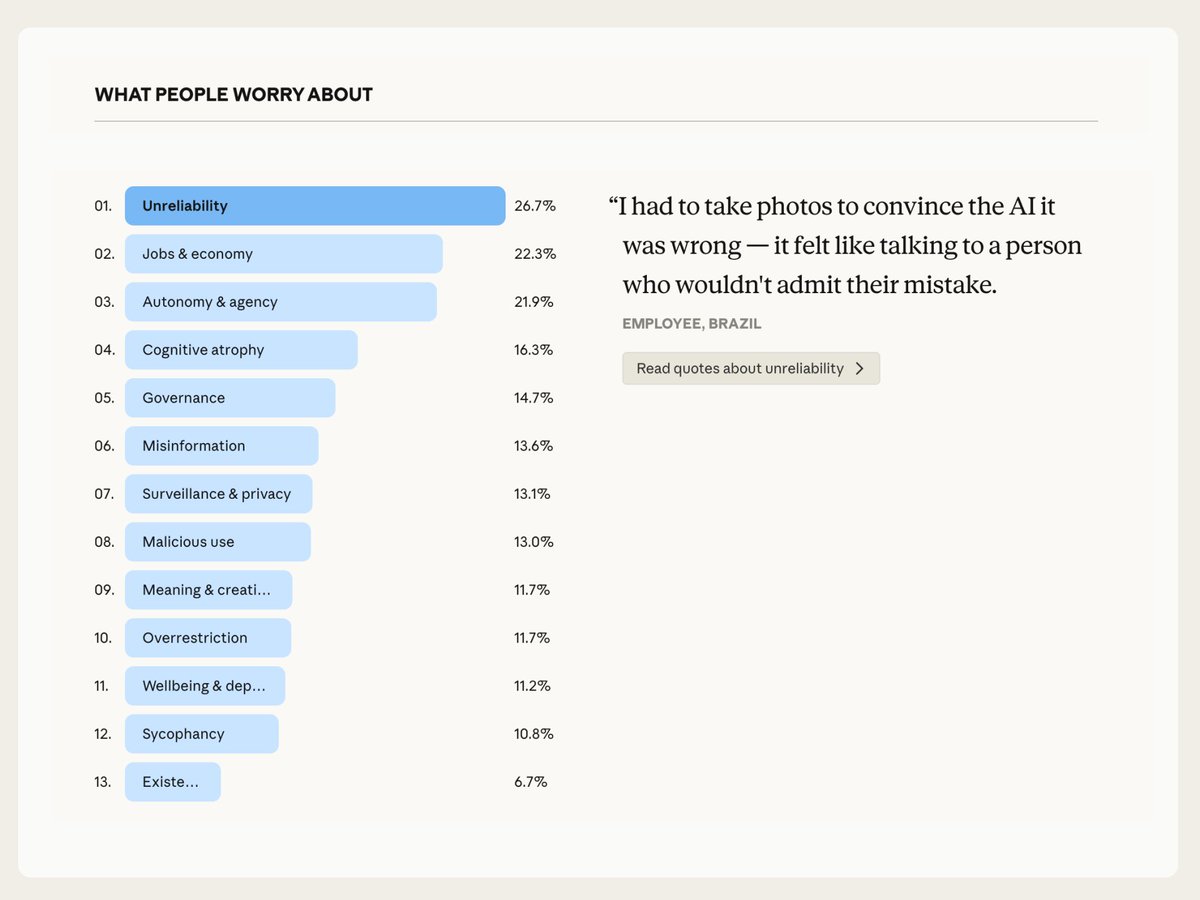

[7/10] R to @AnthropicAI: Hopes clustered around a few basic desires, but concerns about AI were more varied.

Hopes clustered around a few basic desires, but concerns about AI were more varied. Most common were AI unreliability, jobs and the economy, and maintaining human autonomy and agency.

Notably, economic concern was the strongest predictor of overall AI sentiment.

agent_orchestration ron

Source: https://nitter.net/pic/media%2FHDtIQOQaUAAnnyo.jpg

Score: 7/10 (anthropic)

[6/10] New course: Gemini CLI: Code & Create with an Open-Source Agent, built with @googlecloudtech/@geminicli and taught by @JackWoth98.

New course: Gemini CLI: Code & Create with an Open-Source Agent, built with @googlecloudtech/@geminicli and taught by @JackWoth98.

Agentic coding assistants like Gemini CLI are transforming how developers work. This short course teaches you to use Google's open-source agent to coordinate local tools and cloud services for coding and non-coding workflows.

Gemini CLI works from your terminal, so it works with your local files and development tools. You can also connect it to services through MCP. Then provide high-level instructions, and it autonomously plans and executes complex workflows.

Skills you'll gain:

- Build website features and automate code reviews with GitHub Actions

- Create data dashboards that combine local files with cloud data sources

- Use MCP servers and extensions to orchestrate workflows across GitHub, Canva, and Google Workspace

- Generate social media content from multimedia files like conference recordings

I particularly appreciate that Gemini CLI is open-source. You can see exactly how it works, read the prompts it uses, and understand its architecture. The community has contributed thousands of pull requests. Since Gemini 3’s release I've found Gemini CLI highly capable - this is a tool worth having in your toolbox!

Whether you're prototyping applications, automating workflows, or working with multimedia content, join to learn to delegate complex tasks and build faster: deeplearning.ai/short-course…

agent_orchestration orches mcp server agent

Source: https://nitter.net/googlecloudtech

Score: 6/10 (mcp)

[6/10] R to @AnthropicAI: We’re now separating the safety commitments we’ll make unilaterally and our recommendations for the industry.

We’re now separating the safety commitments we’ll make unilaterally and our recommendations for the industry.

We’re also committing to publish new Frontier Safety Roadmaps with detailed safety goals, and Risk Reports that quantify risk across all our deployed models.

Score: 6/10 (anthropic)

[6/10] We hosted an executive dinner at NVIDIA GTC with @Modular.

We hosted an executive dinner at NVIDIA GTC with @Modular.

600+ people signed up, and we had to turn away more than 500. We had a packed house 🔥

This is one of the only dinners where we discuss the impact of AI agents across the entire stack - from infrastructure/systems to context and agentic engineering. Some of the topics we discussed:

- Is the future composed of general models or specialized models?

- Are agents a systems problem or model problem?

- How are non-technical users adopting agent tooling like Claude Cowork?

- Where do humans spend the most time on repetitive document work?

Massive thank you to the Modular team for partnering with @llama_index on this, and to every single person who showed up.

To the 500+ on the waitlist - next time we're getting a bigger venue.

agent_orchestration agent

Source: https://nitter.net/Modular

Score: 6/10 (claude)

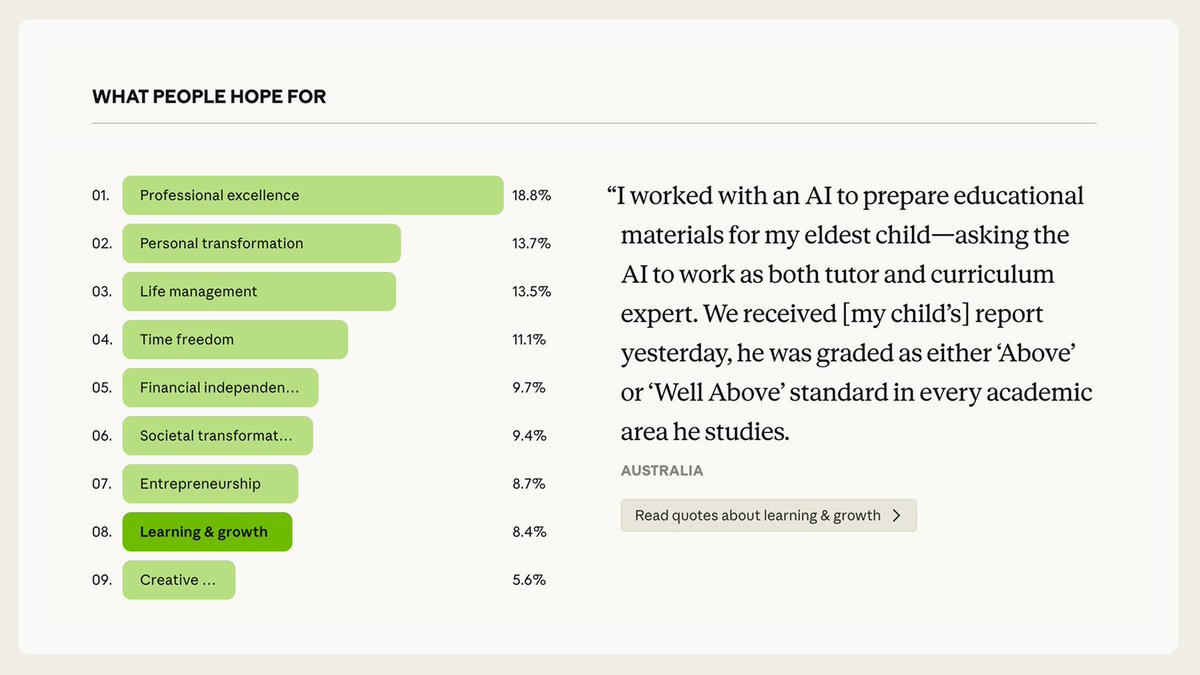

[6/10] R to @AnthropicAI: What do people most want from AI?

What do people most want from AI?

Roughly one third want AI to improve their quality of life—to find more time, achieve financial security, or carve out mental bandwidth. Another quarter want AI to help them do better and more fulfilling work.

self_improvement improve

Source: https://nitter.net/pic/media%2FHDtICzVagAAgD52.jpg

Score: 6/10 (anthropic)